153: Nvidia (x4!), Stripe IPO, Standardized Units of Exercise (SUE), Ho Nam & Fun as Competitive Advantage, Ted Weschler’s Retirement Account, and Psilocybin vs Depression

"Everybody has default modes"

The best investors are not investors at all, they are entrepreneurs who have never sold.

—Nick Sleep

🐦 Friend-of-the-show and supporter (💚🥃) Elliot Turner tweeted about the difference between Instagram and Twitter, and it made me think of one of the defining characteristics of Twitter: its wider dispersion of outcomes for users (especially new users, since on-boarding is particularly challenging).

In other words, the worst of Twitter is much worse, but the best is unrivalled.

On Instagram, even if you have no idea what you're doing, you'll probably just end up following a bunch of pretty people trying to sell you products from their sponsored posts and Shopify stores.

On Twitter, who knows where you’ll end up after the initial “stumbling in the dark” period… Either with a super-boring feed just full of big accounts with millions of followers and fortune cookie tweets, or maybe a non-stop barrage of aggressive political trolls and Bitcoin laser-eyed salespeople or whatever.

But if you successfully figure out how to find your own tribe and plug into the little corner of the interest graph that fits you like a glove, you’ll probably never leave, and it may totally change your life and create deep friendships.

In other words, Insta is a bit more like investing in a basket of blue chips while Twitter is more like doing angel investments in startups.

💪 🚴🏻 🏃♂️ Everybody has default modes of operation.

With some people, you give them free time, and they’ll be moving around, playing sports outside with friends, going to the gym to exercise, or doing some laps at the pool, and then they’ll feel great about it: “can’t wait to get to do this again!”.

You give me some free time, and I’ll be sitting here at the computer reading stuff, or reading a book.

I have to combat this inertia if I don’t want to turn into a mollusk over time.

That’s why I found some bodyweight exercises that I can do anywhere, with as little friction as possible (i.e., I screwed a pull-up bar into my office’s doorframe), and I created a simple system of incentives for myself. It’s all about the:

🎖 Standardized Unit of Exercise 🎖

I’ll call it the SUE, for short.

The way I’m wired, I know that I do better when I track things than if I just play it by ear, and that if I get long streaks, I feel even more motivated to keep it going (the old Jerry Seinfeld trick).

That’s why I track what I eat (calories and macronutrient ratios), I track my intermittent fasting (18:6 on weekdays + longer fasts every few months), and I’ve created a system to track exercise.

Nerdy gym rats probably have complex spreadsheets for each exercise, counting reps and everything, but I’m not like that, I just want as little friction as possible, hence the SUE.

The idea is to create a system where anything I do “earns” the same currency, and by adjusting the “price” of various types of exercise, I can nudge myself into doing more of what I should be doing more of.

Incentives 101.

I got this tally app for my phone, which very easily allows me to increment SUEs, and then I created a “price” list for exercises.

For example, 1 kilometer of walking is worth 1 SUE, 10 push ups is worth 1 SUE, 4 pull-ups is worth 1 SUE, 10 full squats is worth 1 SUE, etc.

If I feel like I’m neglecting something (for example, I just walk a lot and never do the other exercises), I can adjust the “value” of walking downward, or increase the value of other things, and that’ll nudge me to do more of them (because I’m both lazy and wired for efficiency/optimization).
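For the spreadsheet-inclined, here is a minimal sketch of how a price list like this could be tallied. It is only an illustration: the names `PRICES` and `total_sues` and the sample week are made up, and only the four prices come from the list above.

```python
# Hypothetical sketch of a SUE price list; names and the sample week are invented.
# Each price is how many units of an activity it takes to earn 1 SUE.
PRICES = {
    "walking_km": 1,
    "push_ups": 10,
    "pull_ups": 4,
    "full_squats": 10,
}


def total_sues(log, prices=PRICES):
    """Convert a log of {activity: amount done} into a single SUE total."""
    return sum(amount / prices[activity] for activity, amount in log.items())


# An invented week: 5 km walked, 30 push-ups, 8 pull-ups, 20 squats.
week = {"walking_km": 5, "push_ups": 30, "pull_ups": 8, "full_squats": 20}
print(total_sues(week))  # 5 + 3 + 2 + 2 = 12.0

# Nudge away from walking by making it "cheaper": 2 km now earns 1 SUE.
repriced = {**PRICES, "walking_km": 2}
print(total_sues(week, repriced))  # 2.5 + 3 + 2 + 2 = 9.5
```

The same week earns fewer SUEs once walking is repriced, which is the whole nudge: the lazy optimizer goes looking for better-paying exercises.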

Every Monday morning, I have a calendar alert that reminds me to reset my count for the week and dump the results in a spreadsheet. There’s a graph of my SUEs over time, and if things dip too much, I know I have to do something or risk losing momentum (which has been challenging during the pandemic).

It works for me ¯\_(ツ)_/¯

💚 🥃 I figure that the price of a couple coffees or one alcoholic drink isn't a bad trade for 12 emails per month full of eclectic ideas and investing/tech analysis.

The entertainment has to be worth something, but for those that care most about the bottom line:

If you make just one good investment decision per year because of something you learn here (or avoid one bad decision — don’t forget preventing negatives!), it'll pay for multiple years of subscriptions (or multiple lifetimes).

As Bezos would say of Prime, you’d be downright irresponsible not to be a member. It takes 19 seconds (3 on mobile with Apple/Google Pay; just make sure you’re logged in to Substack):

Investing & Business

Stripe One Step Closer to Direct Listing IPO

[Stripe has] taken its first major step toward a stock market debut by hiring a law firm to help with preparations [...]

The 11-year-old company, which was valued by investors at $95 billion in a fundraising round in March [...]

no decision on the timing of the stock market debut, and the next step would be the hiring of investment banks later this year. The listing would be unlikely to happen this year, two of the sources said. [...]

Stripe is considering going public through a direct listing, rather than a traditional IPO, because it does not need to raise money (Source)

🤔

Nvidia: ‘It is a fantastic company, but it is not currently worth more than $500B’

Dylan Patel has a piece that argues that Nvidia is currently overvalued and the stock is about to correct in a similar way to what it has done in the recent past.

He makes some pretty good arguments based on the market misunderstanding cyclical dynamics in data-center and gaming, as well as the impact of crypto (which is going away as Ethereum goes proof-of-stake, or at least ASIC), etc. On top of that, the fact that the market tends to rise on news of a stock split and then correct after the split actually happens…

Maybe Dylan’s right, maybe he isn’t. Always hard to predict the short-term.

What I find most interesting about his piece, though, is what he says about the company, its products, and its strategy:

Nvidia is the backbone of the Artificial Intelligence revolution.

Nvidia is the only firm providing vertically integrated artificial intelligence solutions for the datacenter, edge, robotics, healthcare, the digital metaverse, and manufacturing.

Nvidia is the only semiconductor firm that actually understands software.

Nvidia is the only firm that is cloud native, but understands its biggest competitors are the cloud giants.

Nvidia is the only semiconductor firm that is fighting against these cloud giants by allying with on premises, enterprise, and edge.

Nvidia has vision.

And Nvidia is overvalued.

Jensen Huang’s vision for computing is spot on, but the fundamentals of this company do not reflect the stock price. We are big fans of Nvidia. Their solutions are ingenious. Many of their competitors are being left in the dust because they are not prepared for the AI & data-centric world.

It is a fantastic company, but it is not currently worth more than $500B.

Someone looking out for the next 6-12 months will look at the lines in bold, while someone looking out to the next 5 to 10 years will look more at the non-bolded lines.

Funny how the same thing can be read different ways. And they can both be right (at what they’re doing, in the same way that someone who bought Nvidia in 2017 or 2018 during the last “bubble” and held to today may have gotten a 40-60% CAGR since, and someone who sold then may have avoided a big drawdown, though they may not have bought back at that lower price…)

John Carmack on AI Accelerators

Carmack is one of my favorite engineers/programmers.

I grew up on Doom/Doom 2, playing with my friends at LAN parties in my parents’ basement, spending my free time building my own maps, etc. Then came Quake and Quake 2…

The book ‘Masters of Doom’ about the early days of id Software is a great read for anyone nostalgic for that era and these early first-person shooters, as well as the breakthrough 3D rendering and texture mapping that could run fast on a 486 DX2/66 MHz with 8 megs of RAM…

Anyway, this is just context to share this recent tweet by Carmack, who’s now working on virtual reality at Oculus:

I am provisionally not very interested in custom AI accelerators, because I don't think the extra performance will outweigh the reduced flexibility and immature software compared to GPUs. If they do start to gain traction, Nvidia has plenty of room to just cut prices and squeeze.

This was in regards to cluster scale AGI research — on-chip tensor accelerators are definitely valuable!

A good reminder that it’s not just about some TOPS number on a benchmark, but about the whole package: Dev tools, software ecosystem, ease of development, etc.

If you buy some exotic specialized ML accelerator that gives you better performance/$ than Nvidia’s GPUs, but your coders have to re-learn a whole new way to do things, and they can’t do things they used to be able to do because the tools/platform isn’t as mature, etc, that may not be such a good deal. Engineers are expensive too, and their time and productivity level are assets worth optimizing for.

And the Best-Selling Game Console is…

Speaking of Nvidia, I liked this line by CEO Jensen Huang, putting into perspective the sales of gaming-capable laptops:

If you think about RTX laptops as a game console, it’s the largest game console in the world. There are more RTX laptops shipped each year than game consoles. If you were to compare the performance of a game console to an RTX, even an RTX 3060 would be 30-50% faster than a PlayStation 5. We have a game console, literally, in this little thin notebook, which is one of the reasons it’s selling so well. The same laptop also brings with it all of the software stacks and rendering stacks necessary for design applications, like Adobe and Autodesk and all of these wonderful design and creative tools. The RTX laptop, RTX 3080Ti, RTX 3070Ti, and a whole bunch of new games, that was one major announcement.

Enterprise AI & Industrial Edge, Nvidia Edition

From the same interview, but different topic (so different sub-head):

AI has made tremendous breakthroughs, but has largely been used by the internet companies, the cloud service providers and internet services. What we announced at GTC initially a few weeks ago, and then what we announced at Computex, is a brand new platform that’s called Nvidia Certified AI for Enterprise. Nvidia Certified systems running a software stack we call Nvidia AI Enterprise. The software stack makes it possible to achieve world class capabilities in AI with a bunch of tools and pre-trained AI models. A pre-trained AI model is like a new college grad. They got a bunch of education. They’re trained. But you have to adapt them into your job and to your profession, your industry. But they’re pre-trained and really smart. They’re smart at image recognition, at language understanding, and so on.

Pre-trained models as “smart college grads”. That’s some nice framing/idea compression here, from the master.

How would you use it? Health care would use it for image recognition in radiology, for example. Retail will use it for automatic checkout. Warehouses and logistics, moving products, tracking inventory automatically. Cities would use these to monitor traffic. Airports would use it in case someone lost baggage, it could instantly find it. There are all kinds of applications for AI in enterprises. I expect enterprise AI, what some people call the industrial edge, will be the largest opportunity of all. It’ll be the largest AI opportunity.

Indeed, the big FANG companies are extremely visible, but compared to everything else they’re still pretty small. When all kinds of companies and industries and governments/municipalities start using AI — even if not in ways that are as sophisticated — they’ll be consuming a lot of it.

Data-centers everywhere:

here’s my prediction. Every datacenter and every server will be accelerated. The GPU is the ideal accelerator for these general purpose applications. There will be hundreds of millions of datacenters. Not just 100 datacenters or 1,000 datacenters, but 100 million. The datacenters will be in retail stores, in 5G base stations, in warehouses, in schools and banks and airports. They’ll be everywhere. Street corners. They will all be datacenters. The market opportunity is quite large. This is the largest market opportunity the IT industry has ever seen.

It may not be as fancy, but it’ll be by the gigaton!

…

After 4 different sections on Nvidia in the same edition, I’m thinking of changing the name of this newsletter:

Ted Weschler’s Retirement Account

I didn’t pay attention to this one too closely because, well, the outrage part of it is boring (context here — ProPublica has a piece implying some impropriety, mentioning Weschler by name, because his retirement account had $264.4 million in 2018), but what’s interesting is that Ted Weschler ended up writing a public letter to explain how he got there over the past 35 years, and it’s kinda bonkers:

He compounded his account at something like 30% CAGR for 35 years. Still low compared to Peter Thiel (though a shorter run), but Thiel didn’t do it with publicly traded stock, so it’s a totally different animal.
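To get a feel for why that is bonkers, here is a rough back-of-the-envelope sketch. It assumes a single lump sum compounding at a constant rate with no contributions along the way, which is a simplification and not how the account was actually built; the function names and the comparison rates are just illustrative.

```python
def growth_multiple(rate, years):
    """How many times over a lump sum grows at a constant annual rate."""
    return (1 + rate) ** years


def cagr(start, end, years):
    """Compound annual growth rate implied by a start value and an end value."""
    return (end / start) ** (1 / years) - 1


# The "something like 30%" figure above, over 35 years.
multiple = growth_multiple(0.30, 35)
print(f"30% for 35 years: about {multiple:,.0f}x")  # on the order of 9,700x

# A few lower rates for comparison (illustrative, not from the letter).
for rate in (0.10, 0.20, 0.25):
    print(f"{rate:.0%} for 35 years: about {growth_multiple(rate, 35):,.0f}x")

# Sanity check: feeding the multiple back in recovers the rate.
print(f"{cagr(1, multiple, 35):.2%}")
```

A few points of annual return make an enormous difference over three and a half decades, which is why sticking with it for that long matters so much.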

Weschler closes by saying that he supports capping retirement accounts that exceed a certain amount. h/t Cundill Capital

✨ Interview: Ho Nam, Altos Ventures ✨

I really enjoyed this podcast with Ho Nam by the Acquired guys.

Truly a delightful conversation, full of great stories and insights.

I love people who are just obviously having a blast playing this great game of learning how things work and how to improve, and helping others win alongside them. This is the kind of investing that I like to learn about.

Ho Nam from Altos Ventures — A Different Approach to VC (this link has a transcript too!)

On Twitter, Ho Nam told me:

Having a blast is key. People thought I was having fun even when they thought we were struggling. Or perhaps failing. Of course it’s never that easy. But so important to enjoy the journey and surround yourself with good people. It’s still lonely quite often, but it does help.

This is key on multiple levels. On the 1st level, having fun = better life. That's obvious.

But on the 2nd level, having fun is a competitive advantage. Not only does it probably mean you’ll put more hours into something and be thinking about it even on your “time off”, but the benefits of compounding come at the 11th hour, so anything that helps you stick with it longer than others helps.

Fun is like that — in other words, a non-tap-dancing-to-work Buffett wouldn't have made it to being the Buffett we know. 🥳

Science & Technology

Wing-Like Sails Could Boost Cargo Ship Fuel Efficiency by 20%

I’ve been reading about these types of experiments for cargo ships for probably 15 years. Giant flying kites that pull the ships by harnessing high-altitude winds and such.

Glad to see it’s still being looked into, and hopefully at some point a version of wind-tech for cargo ships is practical and effective enough to be widely deployed.

The above is from Michelin (yes, the French tire-maker), and hopefully it’s not just a PR exercise in greenwashing but they’re actually intending to give it a real try:

The set-up operates with the push of a button. First, the telescopic mast rises from its base, reaching up to 17 meters high. The wing, which starts as a pile of fabric, slowly unfurls as a small air compressor inflates the double-sided material. As wind flows over the 93-square-meter wing, the variations in air pressure create lift, helping propel the vessel forward. When the ship approaches a bridge or encounters rough weather, the system automatically retracts.

Michelin estimates the wing can improve a ship’s fuel efficiency by up to 20 percent, based on measurements from technical tests and simulations, said Benoit Baisle-Dailliez, who leads Michelin’s WISAMO initiative. For a large container ship, that could mean avoiding burning tens of thousands of liters of fuel on a given day. The company plans to test the technology on a commercial freighter in 2022.

More details here. h/t Friend-of-the-show and supporter (💚🥃) Brad Slingerlend

Psilocybin ‘spurs growth of neural connections lost in depression’

I love how the #ShroomBoom studies keep rolling in:

In a new study, Yale researchers show that a single dose of psilocybin given to mice prompted an immediate and long-lasting increase in connections between neurons. [...]

Previous laboratory experiments had shown promise that psilocybin, as well as the anesthetic ketamine, can decrease depression. The new Yale research found that these compounds increase the density of dendritic spines, small protrusions found on nerve cells which aid in the transmission of information between neurons. Chronic stress and depression are known to reduce the number of these neuronal connections. [...]

They found increases in the number of dendritic spines and in their size within 24 hours of administration of psilocybin. These changes were still present a month later. Also, mice subjected to stress showed behavioral improvements and increased neurotransmitter activity after being given psilocybin. [...]

“It was a real surprise to see such enduring changes from just one dose of psilocybin,” he said. “These new connections may be the structural changes the brain uses to store new experiences.” (Source)

SiFive’s P550 is Fastest RISC-V CPU (yet)

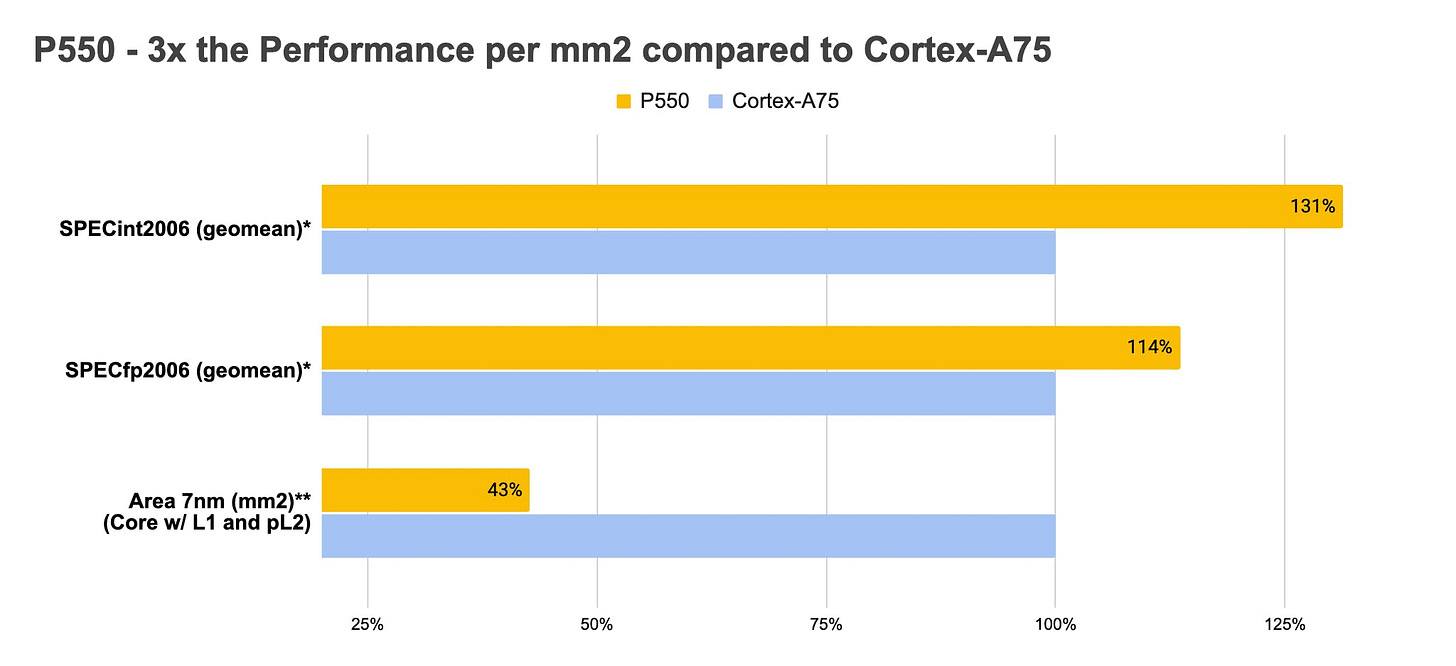

SiFive's new Performance P550 core features a 13-stage, triple-issue, out-of-order pipeline. SiFive claims that a four-core P550-based CPU takes up roughly the same on-die area as a single Arm Cortex-A75, with a significant performance advantage over that competing Arm design. SiFive says the P550 delivers 8.65 SPECInt 2006 per GHz, based on internal engineering test results—a laudable result when compared to Cortex-A75 (and not too far behind an i9-10900K's 11.08/GHz). But it's well behind an Apple A14's 21.1/GHz.
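To put the per-GHz figures quoted above side by side, here is a quick sketch that expresses each one relative to the P550. The three scores are the ones in the quote (the P550 number is SiFive's own claim); the names `SCORES` and `relative_to` are made up for illustration. Keep in mind these are per-GHz numbers, so they say nothing about clock speed or total performance.

```python
# SPECint 2006 per GHz, as quoted above (the P550 figure is SiFive's claim).
SCORES = {
    "SiFive P550": 8.65,
    "Intel i9-10900K": 11.08,
    "Apple A14": 21.1,
}


def relative_to(baseline, scores=SCORES):
    """Express every score as a multiple of the chosen baseline's score."""
    base = scores[baseline]
    return {name: score / base for name, score in scores.items()}


for name, ratio in relative_to("SiFive P550").items():
    print(f"{name}: {ratio:.2f}x the P550's per-GHz score")
```

On these numbers the i9 comes out a bit under 1.3x the P550 per GHz, and the A14 a bit over 2.4x.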

Here’s a comparison with ARM’s Cortex-A75 (which, to be fair, was launched in 2017 and isn’t the latest ARM core — this chart would look different vs the Cortex-A78…):

What I find particularly interesting is that last one. It shows the die area for each (note that the graph isn’t starting from 0%). The P550 is less than half the area of the A75, showing how transistor-efficient the design is.

Apple vs Intel Cores

Kinda unrelated, but if you want to see something beautiful, check out this chart that plots different Intel (blue squares) and Apple (gray dots) cores by performance over time:

Like freaking clockwork! And these are mobile cores vs cores designed to be plugged into the wall!

The Arts & History

Charcoal Speed-Drawing

I love this stuff. Seeing how from almost nothing something so vivid is created so quickly.

Ted Weschler’s response to his Retirement Account balance!! 😁💪🙌🙏🍺