459: Constellation Xmas, Mark Leonard GPT, Apple's 36% Google Cut, Meta + Amazon, Nvidia H200, Brad Jacobs, LNG, and Sopranos

"M&A is one of the most dangerous tools in business"

If thoughts, prayers, and condemnation prevented terror attacks and dictators waging war we'd be living in a paradise.

But they don't. They must actually be fought like hell, as if our civilization depends on it, because it does.

–Garry Kasparov

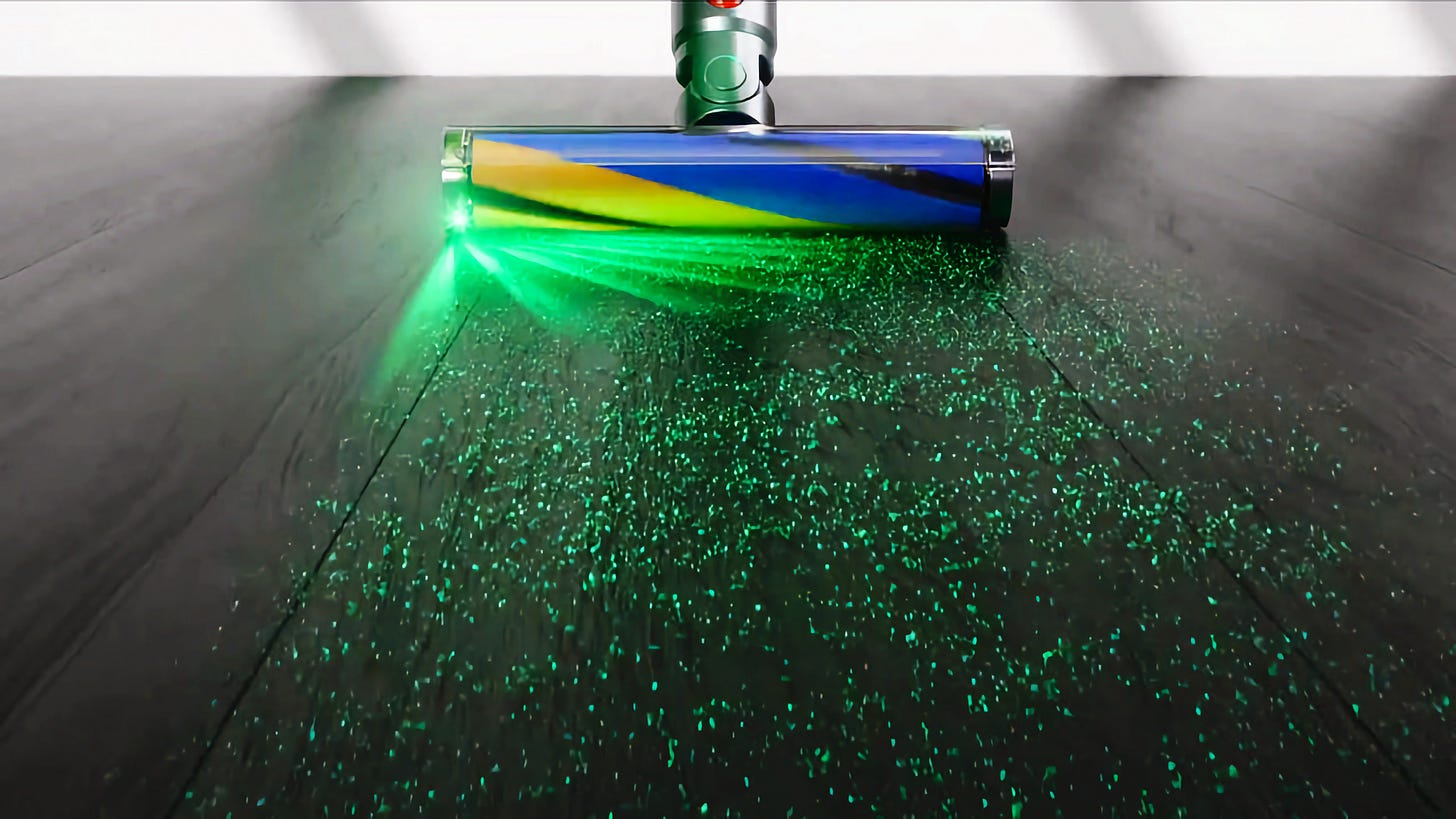

🧹🔍 A few months ago, I bought a Dyson V15 (on sale, directly from Dyson).

Native advertising works!

There’s no doubt that my friend David Senra’s (📚🎙️) multiple episodes about James Dyson, as well as his constant references to him in episodes about other people, had primed me to buy one.

First impressions: Switching from my old, corded, bulky Hoover vacuum (at least it had a cyclone system and was bagless, so it wasn’t entirely prehistoric) to the Dyson felt a bit like going from a Model T to a spaceship — not too different from how it recently felt going from a gasoline car to an EV, or going from wired headphones to AirPods.

It’s stupid, but I found myself coming up with excuses to vacuum, thinking of places where I could use each attachment to try it out. That’s a good sign for a product!

The green laser that shows dirt on the floor is actually useful AND fun.

Making everyday utility products more fun is such an underrated design principle.

As a kid who loved playing with flashlights in the dark, I quickly realized that if you put the beam parallel to the floor you could see all kinds of stuff that was otherwise invisible to the naked eye. This is the same principle. It makes it so satisfying to pick up dust that it makes me vacuum in the dark.

However, the Dyson is not perfect. If I worked for the company, I could think of ways to improve it.

I keep thinking, what if Apple made this? What would they do differently?

The biggest thing for me is that the plastic can feel a bit cheap and you can feel it flex and hear it creak. The tolerances aren’t as tight as they could be, and while plastic is probably the right choice to keep things light, I bet there would be ways to make it feel more solid and premium.

But overall, I recommend it if you’re in the market for a battery-powered vacuum.

📖✍️ Stewart Brand is drafting his next book in public. It’s called “Maintenance: of Everything”.

Here’s the draft of a fascinating chapter about how maintenance plays a role in war, citing all kinds of recent examples from Ukraine. 🇺🇦🛠️

🎮🗡️🛡️🎤 I’m not sure my 9-year-old boy even knows who Taylor Swift is.

Her next album should have a song about Minecraft and/or Zelda!

She’d double her audience! 💰

💚 🥃 If you’re an Extra-Deluxe subscriber (above the normal tier), you will soon get an email from me to set up a group Zoom call with me and other Extra-Deluxe subs. I think it’ll be fun!

(Update: I still haven’t sent the emails… I’m starting to think I may need to do two calls so that people in different time zones can all make it. Plus I just learned that my kids’ school teachers are going on strike next week, so that changes my calendar 😬 I’m trying to think of the best way to survey everyone. You’ll get an email soon if you are Extra-Deluxe, I haven’t forgotten!)

And if we all like it, I may do this on the semi-regular 🗓️

(If you sign up for Extra-Deluxe after the email goes out, don’t worry, I’ll reach out individually and loop you in)

🏦 💰 Liberty Capital 💳 💴

I know what I’m getting my kids for Xmas… 🧙‍♂️🎄

h/t @NotMarkLeonard (made with DALL-E 3)

For more on Constellation Software:

Custom GPTs based on Mark Leonard and Warren Buffett’s Letters 📑📑📑📑📑📑📑🔍🤖

Speaking of the Northern Wizard, I spun up custom GPTs to learn how they work, and my first thought was to upload PDFs of all his shareholder letters to see what would happen.

Here are the first three answers:

My next experiment involved Warren Buffett’s partnership and Berkshire letters. Here’s the first question I asked.

Of course, these ideas are not new to anyone who has read the letters, but it’s still 🤯 that a piece of software and a bunch of GPUs can “read” these hundreds of pages and distill and reformulate these ideas based on simple one-sentence queries…

Interview: Brad Jacobs, Serial Acquirer 🚚📦📦📦

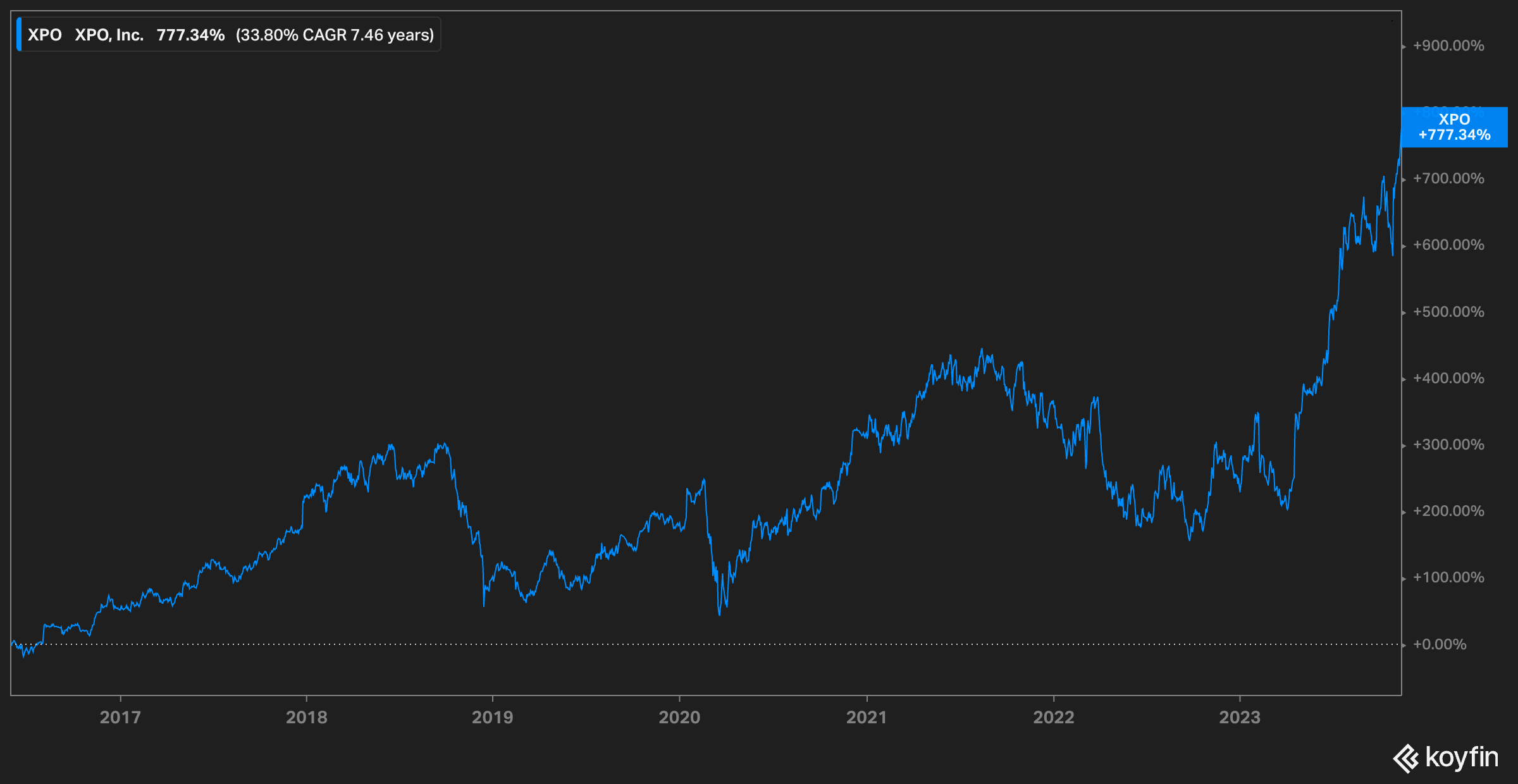

This one took me down memory lane. Years ago, I studied XPO and briefly owned shares. I had to look at my notes — I bought them in June 2015 but sold them shortly after.

I was learning about the industry and ultimately decided that I didn’t have enough confidence in my understanding of it to own the company. I probably sold to free up the capital to buy something else (I don’t remember what — hopefully it wasn’t something that did terribly, compounding the mistake…).

A 33.80% CAGR since mid-2015…

The XPO stock chart since 2015 only tells part of the story, since the company has recently split itself into three. To know the real return, you have to also factor in the value of the GXO and RXO spin-offs.
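To make the spin-off point concrete, here is a minimal sketch of the arithmetic. The prices, share ratios, and holding period below are made-up placeholders (not XPO, GXO, or RXO's real numbers), and the helper names are my own. It also ignores dividends and assumes you simply held the spun-off shares.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a decimal (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def end_value_with_spinoffs(parent_price: float,
                            spinoffs: list[tuple[float, float]]) -> float:
    """Value today of one original share: parent price plus spin-off stakes.

    Each spin-off is (shares_received_per_parent_share, spinoff_price).
    """
    return parent_price + sum(ratio * price for ratio, price in spinoffs)


# Hypothetical inputs purely for illustration (NOT real prices).
start_price = 10.0                      # price paid per share
parent_price_now = 40.0                 # parent's price today
spinoffs = [(1.0, 25.0), (1.0, 15.0)]   # two spin-offs, 1 share each
years_held = 8.0

parent_only = cagr(start_price, parent_price_now, years_held)
with_spinoffs = cagr(
    start_price,
    end_value_with_spinoffs(parent_price_now, spinoffs),
    years_held,
)
print(f"parent only: {parent_only:.2%}, including spin-offs: {with_spinoffs:.2%}")
```

The gap between the two printed figures is the part of the return that a chart of the parent alone leaves out.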

Anyway, back to the interview:

It’s an interesting peek into the mind of a serial acquirer. M&A is one of the most dangerous tools in business, but also one of the most powerful when deployed well.

Historically, many of the best-performing companies have added a lot of value through smart M&A *and* many of the biggest disasters have gotten there through dumb M&A…

I also like his approach to using technology in his businesses.

There are two main approaches and both can be effective. Rather than start from what tech is available and then try to see if it can be used to help the business (solution-first approach), he starts by figuring out what the problems in the business are — what could be automated, where friction could be reduced, what new offerings customers would like — and then determines which of them can be solved with tech (problems-first approach).

I also really liked his framing of meditation as “thought experiments” with some direction, but also plenty of space to let the mind wander and think through possibilities.

I don’t think I have enough time for that in my life — I’ve written in the past about how I think we need to find the right balance between inputs and outputs, and it’s probably not good to be constantly reading/listening to podcasts and audiobooks/etc.

We need “processing” time to digest, connect, and integrate all those inputs.

🍎💰💰💰💰💰💰 Apple Gets 36% of Google Revenue in Search Deal, Expert Witness Reveals in Court (oops)

This apparently wasn’t supposed to come out:

Google pays Apple 36% of the revenue it earns from search advertising made through the Safari browser, the main economics expert for the Alphabet unit said Monday.

Kevin Murphy, a University of Chicago professor, disclosed the number during his testimony in Google’s defense at the Justice Department’s antitrust trial in Washington.

John Schmidtlein, Google's main litigator, visibly cringed when Murphy said the number, which was supposed to remain confidential.

The jaw-dropping number was confirmed in court yesterday by Sundar Pichai, so it’s super-duper-official.

We’ve long known that Google paid Apple a very large amount in absolute dollars, but I wouldn’t have guessed it was based on such a high percentage of revenues, right off the top.

This is a testament to both how valuable Apple’s customers are, and how valuable being the default choice is.

Even if most users would ultimately switch back to Google after it lost the default spot, the value per user of the cohort that didn’t switch back would be high enough for Google to decide it is worth spending all this money to keep them around.
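A back-of-the-envelope sketch of why a percentage-based deal favors whoever monetizes best. The 36% comes from the testimony above. The dollar figure and function names are hypothetical, and the model is a simplification (one revenue-per-user number per search engine, nothing else considered).

```python
GOOGLE_SHARE = 0.36  # revenue share disclosed in court


def payment_per_user(revenue_per_user: float, share: float) -> float:
    """What the search engine pays the platform owner for each user."""
    return revenue_per_user * share


def breakeven_monetization_ratio(incumbent_share: float,
                                 rival_share: float = 1.0) -> float:
    """Rival revenue per user, as a fraction of the incumbent's,
    needed for the rival's payment to match the incumbent's payment."""
    return incumbent_share / rival_share


# Hypothetical: suppose the incumbent earns $100 per user per year.
incumbent_revenue = 100.0
incumbent_payment = payment_per_user(incumbent_revenue, GOOGLE_SHARE)

# Even handing over 100% of its revenue, a rival only matches that
# payment if it earns at least this fraction of the incumbent's revenue.
needed_ratio = breakeven_monetization_ratio(GOOGLE_SHARE, rival_share=1.0)
print(f"incumbent pays ${incumbent_payment:.2f} per user")
print(f"rival needs at least {needed_ratio:.0%} of incumbent revenue per user")
```

In other words, under these assumptions, a rival that earns less than 36% of Google's revenue per user can't match the payment even by giving everything away.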

As friend-of-the-show Eric Seufert points out:

Based on Microsoft's own modeling, even if Microsoft offered a 100% revenue share to Apple in exchange for default status (remember: this arrangement is priced in terms of % revenue, not a fixed fee!), Google could still outbid it. Entirely a function of performance.

🛒🤝📲 Meta + Amazon

Amazon and Meta Platforms are testing a feature that lets shoppers buy Amazon products directly from ads on Instagram and Facebook.

The initiative, which involves asking consumers to link their Amazon accounts to their social-media profiles, could make Meta more attractive to advertisers and let Amazon attract more shoppers from outside its web store. [...]

US shoppers will see real-time pricing, delivery estimates and product details on select Amazon ads running on Facebook and Instagram

The pieces of the puzzle are still reconfiguring themselves since Apple’s ATT shattered the old regime. The blast radius was large, and new alliances are being formed. (This makes it sound like a Star Wars intro crawl…)

It’s not yet clear how large this partnership will be, but there’s certainly the potential for something BIG if both sides like what they see from this early testing.

Ben Thompson (💚 🥃 🎩) gets to the crux of why this makes sense:

The pay-off is, once again, a win-win: Meta gets better tracking, and thus better performing ads, while Amazon gets more volume, both as a first order concern in terms of Meta ads, but also as a second order in that letting Meta have the key data will drive even more conversions in the long run. Remember that for all of Amazon ads’ outsized success they are still about skimming money from intent-based search: Meta excels at finding people at the top-of-the-funnel, and introducing them to products they didn’t even know they wanted.

🧪🔬 Liberty Labs 🧬 🔭

🏎️🔥 Nvidia Unveils H200 Souped-Up Hopper GPU

While H100s have been 2023’s status symbol, that’s about to shift to H200s:

The NVIDIA H200 is the first GPU to offer HBM3e — faster, larger memory to fuel the acceleration of generative AI and large language models, while advancing scientific computing for HPC workloads. With HBM3e, the NVIDIA H200 delivers 141GB of memory at 4.8 terabytes per second, nearly double the capacity and 2.4x more bandwidth compared with its predecessor, the NVIDIA A100. [...]

An eight-way HGX H200 provides over 32 petaflops of FP8 deep learning compute and 1.1TB of aggregate high-bandwidth memory for the highest performance in generative AI and HPC applications.

As far as I can tell, the biggest difference between the H100 and H200 is the memory — but that’s a big deal considering how the challenge with these GPUs is to keep them fed with data at all times.

The HBM3e RAM has 4.8 terabytes per second (TB/s) of bandwidth, compared to 3.35 TB/s on the H100. Total memory capacity is now 141GB vs 80GB for the H100.

So bigger and faster 🚀 which leads to pretty significant performance improvements:

The introduction of H200 will lead to further performance leaps, including nearly doubling inference speed on Llama 2, a 70 billion-parameter LLM, compared to the H100. Additional performance leadership and improvements with H200 are expected with future software updates. [...]

Nvidia presented a slide during the announcement showing performance on the GPT-3 model, using the A100 as the baseline: the H100 is 11x faster and the H200 is 18x faster (a pretty significant improvement for a GPU that is a refinement of the existing architecture).
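Putting the numbers quoted in this section side by side, here is a quick sketch of the H200-over-H100 ratios. The inputs are only the figures cited above (memory, bandwidth, and the slide's speedups relative to the A100). The variable names are mine, and this is simple division, not a benchmark.

```python
# Figures as quoted in this section.
h100 = {"memory_gb": 80, "bandwidth_tb_s": 3.35, "gpt3_speedup_vs_a100": 11}
h200 = {"memory_gb": 141, "bandwidth_tb_s": 4.8, "gpt3_speedup_vs_a100": 18}

# How much bigger/faster the H200 is relative to the H100.
capacity_gain = h200["memory_gb"] / h100["memory_gb"]
bandwidth_gain = h200["bandwidth_tb_s"] / h100["bandwidth_tb_s"]
gpt3_gain = h200["gpt3_speedup_vs_a100"] / h100["gpt3_speedup_vs_a100"]

# Aggregate memory of an eight-GPU HGX H200 board, in terabytes.
hgx_memory_tb = 8 * h200["memory_gb"] / 1000

print(f"capacity:  {capacity_gain:.2f}x")
print(f"bandwidth: {bandwidth_gain:.2f}x")
print(f"GPT-3 (per the slide): {gpt3_gain:.2f}x")
print(f"8-way HGX memory: {hgx_memory_tb:.3f} TB")
```

That works out to roughly 1.76x the capacity, 1.43x the bandwidth, and about 1.64x on the GPT-3 slide, with the eight-way board landing at the 1.1TB figure Nvidia quotes.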

🚘‼️🚙 Waze app to highlight accident-prone areas

What would happen if you knew in advance that you were approaching a road that had a history of crashes?

Thanks to AI and reports from the Waze community, crash history alerts combine historical crash data and key information about your route - such as its typical traffic levels, whether it’s a highway or local road, elevation, and more. If your route includes a crash-prone road, we’ll show you an alert before you reach that section of your journey. To minimize distractions, we limit the number of alerts drivers see, and don’t show them on roads they regularly navigate.

I think this is great, and should be rolled out to Google Maps (Waze is also owned by Google) and copied by Apple Maps.

Drivers should always pay attention, but realistically, people have an “attention budget” and they can’t always be in “maximum alertness” mode.

Helping them decide when to be extra careful could be hugely valuable, as there may be a small % of road areas where a majority of accidents take place. Knowing that in advance can no doubt help avoid problems.

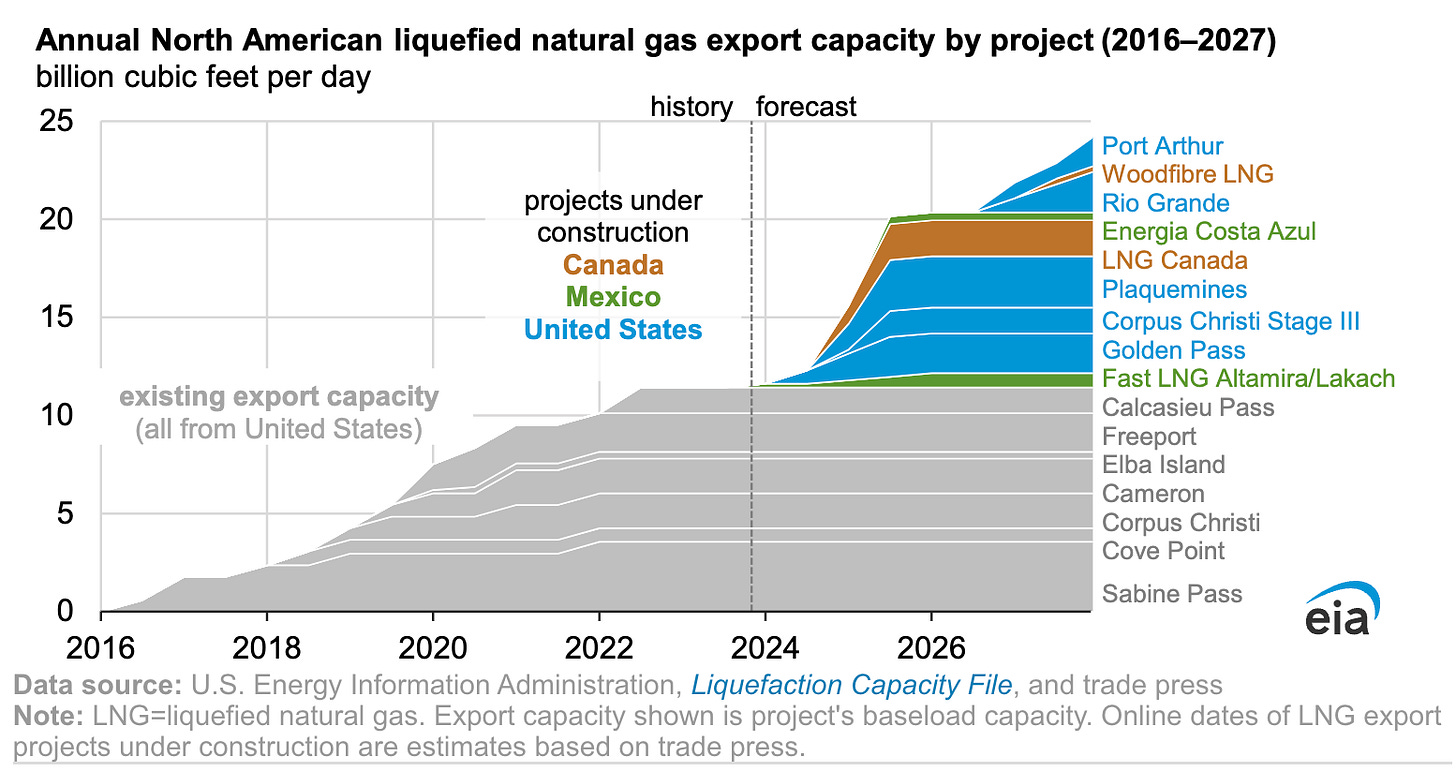

‘LNG export capacity from North America is likely to more than double through 2027’ 🔥📈

The EIA forecasts:

We expect North America’s liquefied natural gas (LNG) export capacity to expand to 24.3 billion cubic feet per day (Bcf/d) from 11.4 Bcf/d today as Mexico and Canada place their first LNG export terminals into service and the United States adds to its existing LNG capacity. By the end of 2027, we estimate LNG export capacity will grow by 1.1 Bcf/d in Mexico, 2.1 Bcf/d in Canada, and 9.7 Bcf/d in the United States from a total of 10 new projects across the three countries.

Most of these new terminals will be built around the Gulf of Mexico.
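A quick sanity check that the EIA figures quoted above add up to the "more than double" headline. The numbers are taken straight from the quote, and the variable names are mine.

```python
# All values in billion cubic feet per day (Bcf/d), from the EIA quote.
current_capacity = 11.4
additions_by_2027 = {"Mexico": 1.1, "Canada": 2.1, "United States": 9.7}

total_additions = sum(additions_by_2027.values())
projected_capacity = current_capacity + total_additions
growth_multiple = projected_capacity / current_capacity
us_share_of_additions = additions_by_2027["United States"] / total_additions

print(f"projected capacity: {projected_capacity:.1f} Bcf/d")
print(f"growth multiple: {growth_multiple:.2f}x")
print(f"US share of new capacity: {us_share_of_additions:.0%}")
```

The three additions sum to 12.9 Bcf/d, which gets you from 11.4 to the 24.3 Bcf/d in the forecast, and about three quarters of the new capacity is in the United States.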

🎨 🎭 Liberty Studio 👩‍🎨 🎥

🍷🍝 Rewatching ‘The Sopranos’ 📺

Recently, my wife and I watched the first ‘Sopranos’ episode. I was due for a rewatch of the series and she has never seen it.

I had forgotten how much stuff is in the pilot, how many characters, how many threads. It’s like a mini-movie!

It’s been a really long time since I watched the series, 10+ years. I’m really looking forward to rediscovering it.

🎬 Storytelling Entropy, Nostalgia Baiting, Irony Poisoning, and the Marvelization of Cinema 🍿

This video essay is about how cinema turned into content, and how some good ideas pushed too far — or misunderstood — can become bad ideas.

I think you may find this interesting in relation to your LNG information. Since the US is such a large LNG exporter, there has recently been a backup of vessels at the Panama Canal, and LNG vessels are paying a skip-the-line fee to get through, which of course causes other problems. URL: https://www.freightwaves.com/news/panama-canal-restrictions-are-rerouting-lpg-shipping-flows

The META-AMZN deal may be copying the recent success of TikTok Shop in directing e-commerce to various vendors like Temu.

I'm trying to figure out who the winners in HBM will be among SK hynix, Micron, Samsung, and maybe Rambus?