617: Claude's Moment, Jensen's Chequebook, GPT-5.4, Nvidia + HBM, OpenAI + The Trade Desk, John Arnold, Cursor the Next Perplexity?, Sony, and Guitar Shapes

"Friction is a tax on good habits."

A good way to study a phenomenon is to see what happens when it disappears.

—Anil Seth

🛀💭🎸🏋️ Sometimes I find it almost embarrassing how much friction matters.

I own both an acoustic and an electric guitar, and I pick up the acoustic ten times more.

Why?

Not because I love it more, but because it’s instant: grab it and play.

The electric has a whole startup ritual: strap, cable, amp, power, adjust volume and tone, etc. It’s not that long. It shouldn’t matter this much. But it does. A surprising amount of life is downstream of tiny frictions.

Friction is a tax on good habits.

That’s why I built a basement gym with a squat rack, dumbbells, and a balance board. If I had to drive to the gym… well 😅

Reduce friction for the things you want more of. Add it for the things you want less of. What's the unplugged guitar in your life?

I know it’s not a novel insight. But a lot of the most powerful ideas aren’t obscure; they’re just hard to keep in mind and implement consistently. This is one.

🏦 💰 Liberty Capital 💳 💴

🚀💰 Claude’s Moment: Anthropic Hits $20bn Run Rate as It Closes the Gap With OpenAI

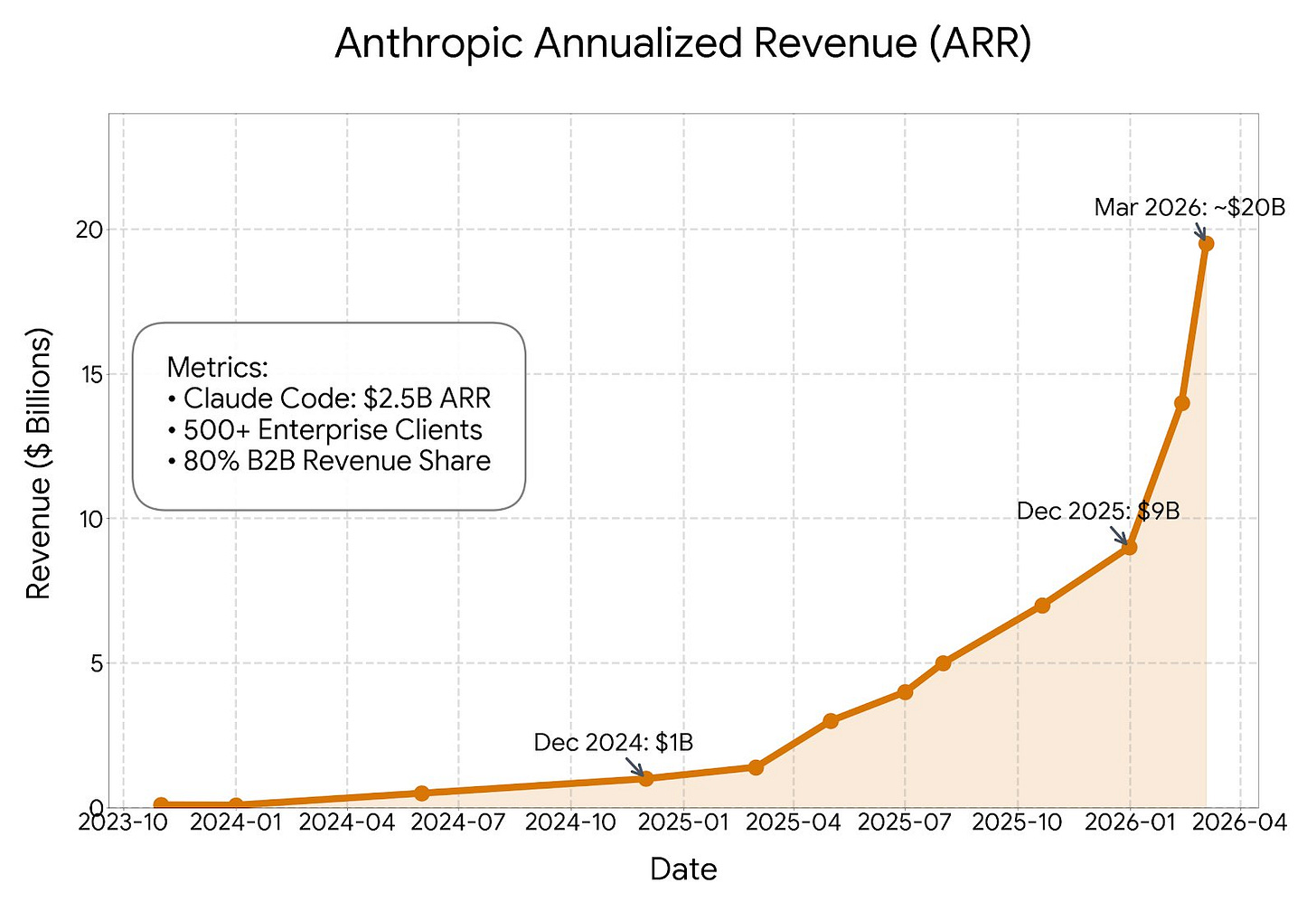

The graph above is pretty incredible. Just think about the scale here: Anthropic’s revenue run rate was reportedly around $1bn at the beginning of 2025 and roughly $9bn at the end of 2025. Then, about two months later, it reportedly more than doubled to nearly $20bn. OpenAI, meanwhile, is reportedly at about a $25bn run rate.

This may look like bad uptime at first glance, but most of those red and yellow bars came during a period when Anthropic was adding almost $10bn of run-rate revenue in an *extremely* infrastructure-intensive business. You can’t just toggle on autoscaling on AWS and call it a day.
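For a sense of how steep that ramp is, here’s a quick back-of-the-envelope sketch of the implied compound monthly growth rate. It assumes the approximate reported figures above (~$1bn to ~$9bn over the 12 months of 2025, then ~$9bn to ~$20bn over about two months) and a constant monthly growth rate. These are rounded, reported numbers, not exact data, and `implied_monthly_growth` is just a helper made up for illustration.

```python
# Back-of-the-envelope: implied compound monthly growth of a revenue run rate.
# Assumption: the inputs are the approximate *reported* figures quoted in the
# text, and growth is treated as a constant monthly rate (a simplification).

def implied_monthly_growth(start: float, end: float, months: float) -> float:
    """Constant monthly rate r such that start * (1 + r) ** months == end."""
    return (end / start) ** (1 / months) - 1

# Approximate figures in $bn (reported, rounded)
during_2025 = implied_monthly_growth(start=1.0, end=9.0, months=12)
early_2026 = implied_monthly_growth(start=9.0, end=20.0, months=2)

print(f"2025 average monthly growth: {during_2025:.1%}")    # 20.1%
print(f"Last ~2 months, monthly growth: {early_2026:.1%}")  # 49.1%
```

If these rough figures hold, the pace didn’t just continue; it more than doubled on a monthly basis, which helps explain why the infrastructure struggled to keep up.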

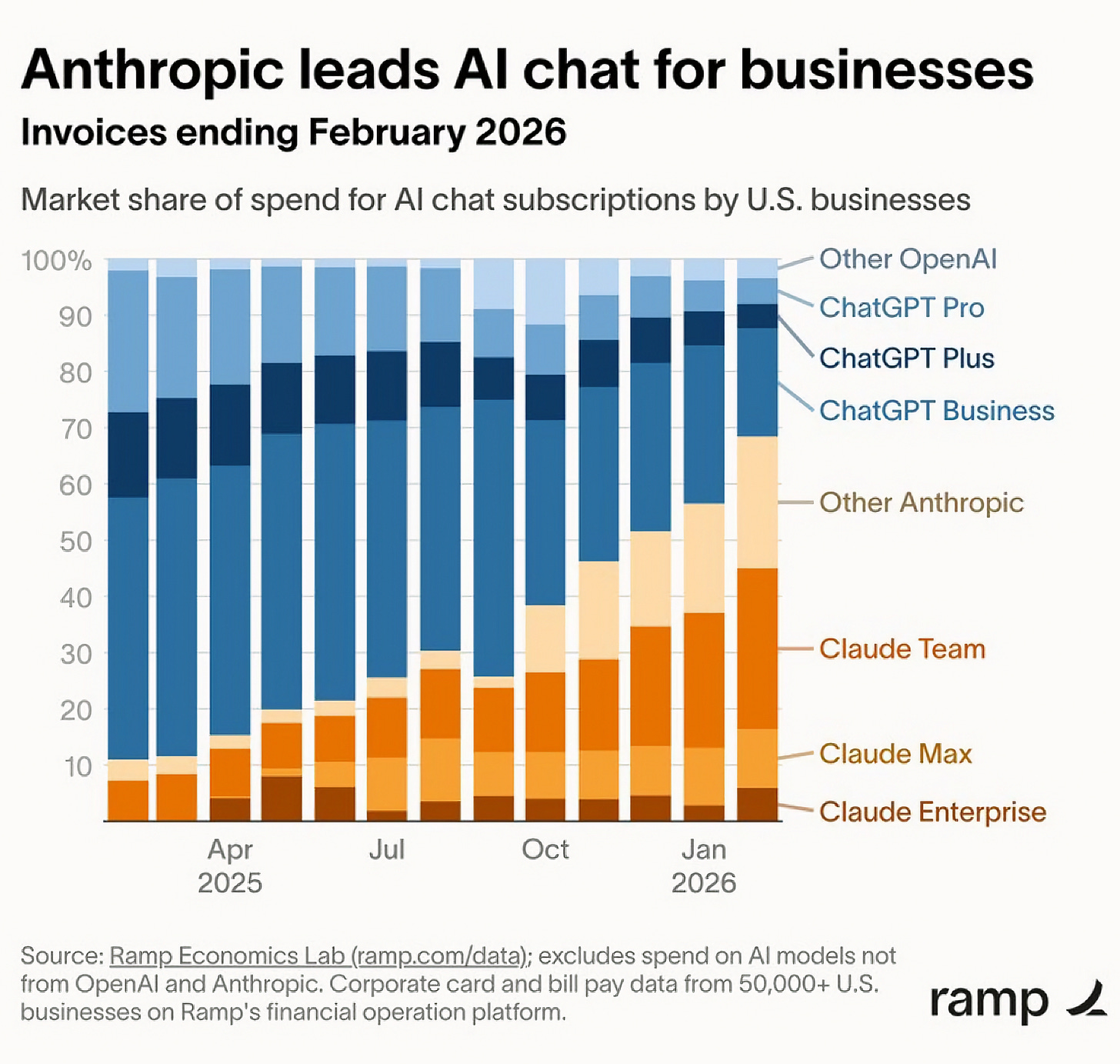

Ramp’s data may not be fully representative because it skews toward startups, but it still gives a directional sense of what’s going on. Anthropic has been taking paid-subscription market share from OpenAI, though to be fair, most of these businesses subscribe to both.

And that was before the recent drama.

Because of the very public Pentagon SNAFU, Claude hit #1 in the App Store for the first time ever, while OpenAI faced a backlash as people publicly cancelled their paid subs and switched to Claude. Mike Krieger (Instagram co-founder, now CPO at Anthropic) said yesterday that “More than a million people are now signing up for Claude every day.”

Anthropic always seemed like the enterprise play. Will it end up with a real consumer business after all? 🤔

OpenAI’s brand has taken a pretty big hit with both consumers AND researchers, some of whom have left to join Anthropic. This includes Max Schwarzer, who was VP of Research and the lead of post-training/RL. He helped create the reasoning paradigm with o1 (🍓) and o3, to which all of today’s best models can trace some lineage.

That kind of talent departure may have the longest-lasting impact, but we probably won’t know for a while. There’s always lag between when the work is done and when it shows up in released models.

But you can tell it hurts by how frantically Sam Altman has been doing damage control (with a lot of it backfiring…) 💣💥

If anything good comes of this, it may be that in the future, there will be a lot more scrutiny from the general public and, hopefully, lawmakers when it comes to things like mass surveillance via AI. 📃🔍

The supply chain risk designation has been confirmed, but with a narrower application than the SecDef first claimed (the broader version would have been a killshot). All this scrutiny probably means that OpenAI’s deal with the DoD will be tighter than it otherwise would have been, if only because OpenAI doesn’t want more AI researchers to quit. It’s all about incentives.

Update: Dario Amodei posted a statement last night:

The language used by the Department of War in the letter (even supposing it was legally sound) matches our statement on Friday that the vast majority of our customers are unaffected by a supply chain risk designation. With respect to our customers, it plainly applies only to the use of Claude by customers as a direct part of contracts with the Department of War, not all use of Claude by customers who have such contracts.

The Department’s letter has a narrow scope, and this is because the relevant statute (10 USC 3252) is narrow, too. It exists to protect the government rather than to punish a supplier; in fact, the law requires the Secretary of War to use the least restrictive means necessary to accomplish the goal of protecting the supply chain. Even for Department of War contractors, the supply chain risk designation doesn’t (and can’t) limit uses of Claude or business relationships with Anthropic if those are unrelated to their specific Department of War contracts.

That’s not necessarily a win.

Dean W. Ball, who served as a senior AI policy advisor in the Trump White House and was the organizing author of its AI Action Plan, gave some context:

The law was narrower than Hegseth's tweet. The damage may not be.

🐜 Nvidia’s HBM Procurement Edge over EVERYONE

I was listening to the latest episode of The Circuit, where Jay Goldberg and Ben Bajarin discuss the semiconductor industry. They made an interesting point about how the memory crunch may affect ASICs vs Nvidia: