626: ARM's Not Just IP Anymore, From Magnificent 7 to Hateful 8, Benedict Evans, Netflix Pricing, Microsoft, Inference Bandwidth, Snob Sandwich, and Pink Floyd

"You’re a strategy taker, not a strategy setter."

Innovation is the central issue in economic prosperity.

—Michael Porter

🛀💭🚪🤝 Alec Stapp writes:

Landlord offered to renew my lease at the same rent for another 12 months.

Usually I wouldn’t spend the time to negotiate if they’re not trying to raise the rent, but figured I’d let Claude have a go and negotiate on my behalf.

Claude did a market comp analysis and drafted the counteroffer for me.

Landlord just came back and agreed to an 8% decrease in my rent.

Thanks, Claude. Let’s just say the $20/month sub pays for itself :)

What happens when his landlord has an AI negotiate back, and it’s just a bunch of AIs negotiating with each other?

What happens to outcomes? Do they converge faster? Do models collude? Will someone fine-tune AIs that specialize in a specific kind of haggling based on exploiting known weaknesses in the popular chatbots most likely to be used on the other side? 🤔

🤖🔎↔️ Speaking of persuasive AIs, be careful where you point that persuasiveness, because it'll work on you too.

- Drafted a blog post

- Used an LLM to meticulously improve the argument over 4 hours.

- Wow, feeling great, it’s so convincing!

- Fun idea let’s ask it to argue the opposite.

- LLM demolishes the entire argument and convinces me that the opposite is in fact true.

- lol

The LLMs may elicit an opinion when asked but are extremely competent in arguing almost any direction. This is actually super useful as a tool for forming your own opinions, just make sure to ask different directions and be careful with the sycophancy.

Always steelman multiple positions before you make up your mind. Otherwise, your AI-polished conviction might just be the last direction you pointed the model.

In fact, don't leave it to memory. Add a custom instruction so your AI does it automatically, every time, without you having to think about it.

📬💡📺🎬📚❤️🔥 OSV Field Notes is a weekly, high-signal curation of things worth your time. It’s written by my OSV colleagues and me. It exists because there’s so much good stuff out there that never makes it to the algo-controlled slopfests on most apps.

It’s maybe too effective, because every week, I’m adding new things to my reading list and watchlist 😅

🔎📫💚 🥃 Too few readers of this newsletter are paid supporters, and it’s threatening the project's existence.

I’m not saying this to be dramatic. I just want to level with you.

Paid support has declined, even as total readership has continued to climb and now approaches 30,000. 📉📈 (Update: Thank you to those who became supporters in the past couple of weeks ♥️)

If you want it to continue, this is the moment. Become a paid supporter 👇

🏦 💰 Liberty Capital 💳 💴

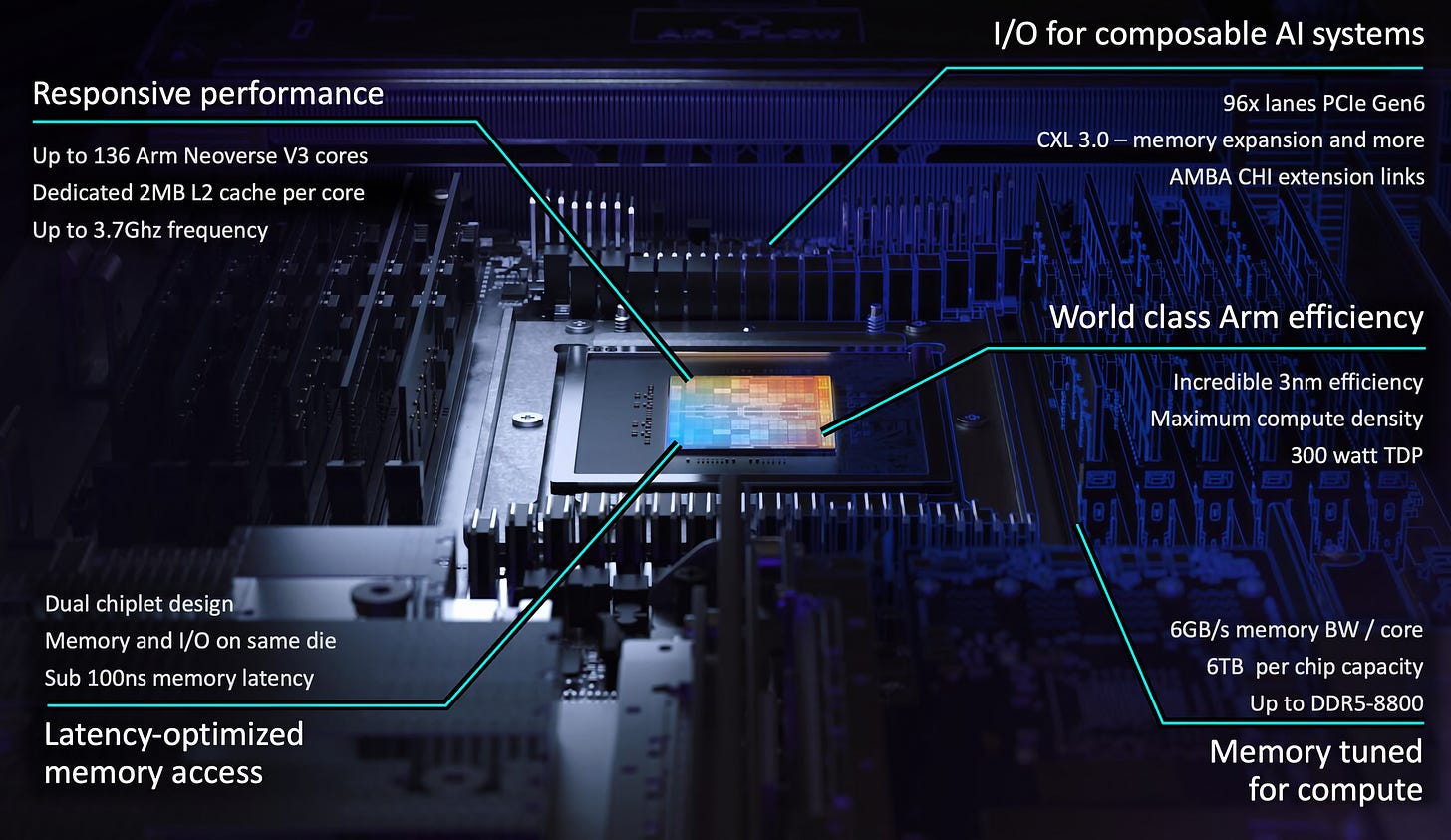

💪 ARM CPU: We’re not just IP anymore! 👨🔬🍰

The least secret secret in the industry was that ARM was working on making its own chips. When Rene Haas became CEO, he clearly wanted ARM to take a bigger slice of the value it creates, and this partial change of business model is the result of that.

As AI is going agentic, the world will need a lot more CPUs. And the ideal chip for AI agents isn’t necessarily the same as before. Nvidia’s Vera CPUs (also ARM-based) are tuned for AI agents, whose usage patterns look nothing like a human hammering the same system. ARM has clearly been thinking along similar lines.

They call it the ARM AGI CPU, and it’s designed to offer a crapload of cores in a relatively low power envelope:

The Arm AGI CPU is designed to deliver high per-task performance at sustained load across thousands of cores in parallel – all within the power and cooling limits of modern data centers. [...]

Arm’s reference server configuration is a 1U, 2-node design – packing in two chips with dedicated memory and I/O for a total of 272 cores per blade. These blades are designed to fully populate a standard air-cooled 36kW rack – 30 blades delivering a total of 8160 cores. Arm has additionally partnered with Supermicro on a liquid-cooled 200kW design capable of housing 336 Arm AGI CPUs for over 45,000 cores.
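For anyone who wants to sanity-check the rack math in that quote, here is a quick back-of-the-envelope sketch. The blade, rack, and chip counts come from the announcement; the cores-per-chip figure is my own derivation (assuming the 272 cores are split evenly between the two chips), and the cores-per-kW numbers use the rack power limits as a rough stand-in, not measured CPU power draw.

```python
# Figures quoted in Arm's announcement.
CORES_PER_BLADE = 272       # 1U, 2-node blade with two chips
CHIPS_PER_BLADE = 2
BLADES_PER_AIR_RACK = 30    # standard air-cooled rack
AIR_RACK_KW = 36
LIQUID_RACK_CHIPS = 336     # Supermicro liquid-cooled design
LIQUID_RACK_KW = 200

# Derived, not stated in the source: assumes an even split across both chips.
cores_per_chip = CORES_PER_BLADE // CHIPS_PER_BLADE

air_rack_cores = CORES_PER_BLADE * BLADES_PER_AIR_RACK
liquid_rack_cores = cores_per_chip * LIQUID_RACK_CHIPS


def cores_per_kw(cores: int, rack_kw: float) -> float:
    """Cores per kW of rack power budget (a rough density proxy only)."""
    return cores / rack_kw


print(cores_per_chip)     # 136
print(air_rack_cores)     # 8160
print(liquid_rack_cores)  # 45696
print(round(cores_per_kw(air_rack_cores, AIR_RACK_KW), 1))        # 226.7
print(round(cores_per_kw(liquid_rack_cores, LIQUID_RACK_KW), 1))  # 228.5
```

The numbers line up with the quoted 8160 and "over 45,000" cores. Under these assumptions, the liquid-cooled design gets its extra cores from a bigger power budget rather than from noticeably higher density per kW.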

One notable architectural choice is ARM’s "dedicated core per program thread" model, which the company says delivers "deterministic performance under sustained load by eliminating throttling and idle threads." This matters when you’re running thousands of parallel agent tasks that each need predictable latency more than peak burst performance.

In this configuration, the Arm AGI CPU is capable of delivering more than 2x the performance per rack compared to the latest x86 systems. [this is ARM's figure — Intel predictably disagrees. -Lib]

Arm said Meta will be the chip’s “lead partner” and “co-developer” — one more option for the compute swing voter — with other early customers including OpenAI, Cloudflare, SAP, SK Telecom, and Cerebras.

So many companies were part of the announcement. ARM is clearly threading a needle here, being careful not to compete head-on with its own customers (at least at this stage), and is instead targeting an underserved niche. For now.

See how I resisted making ‘arm’ puns. 😅

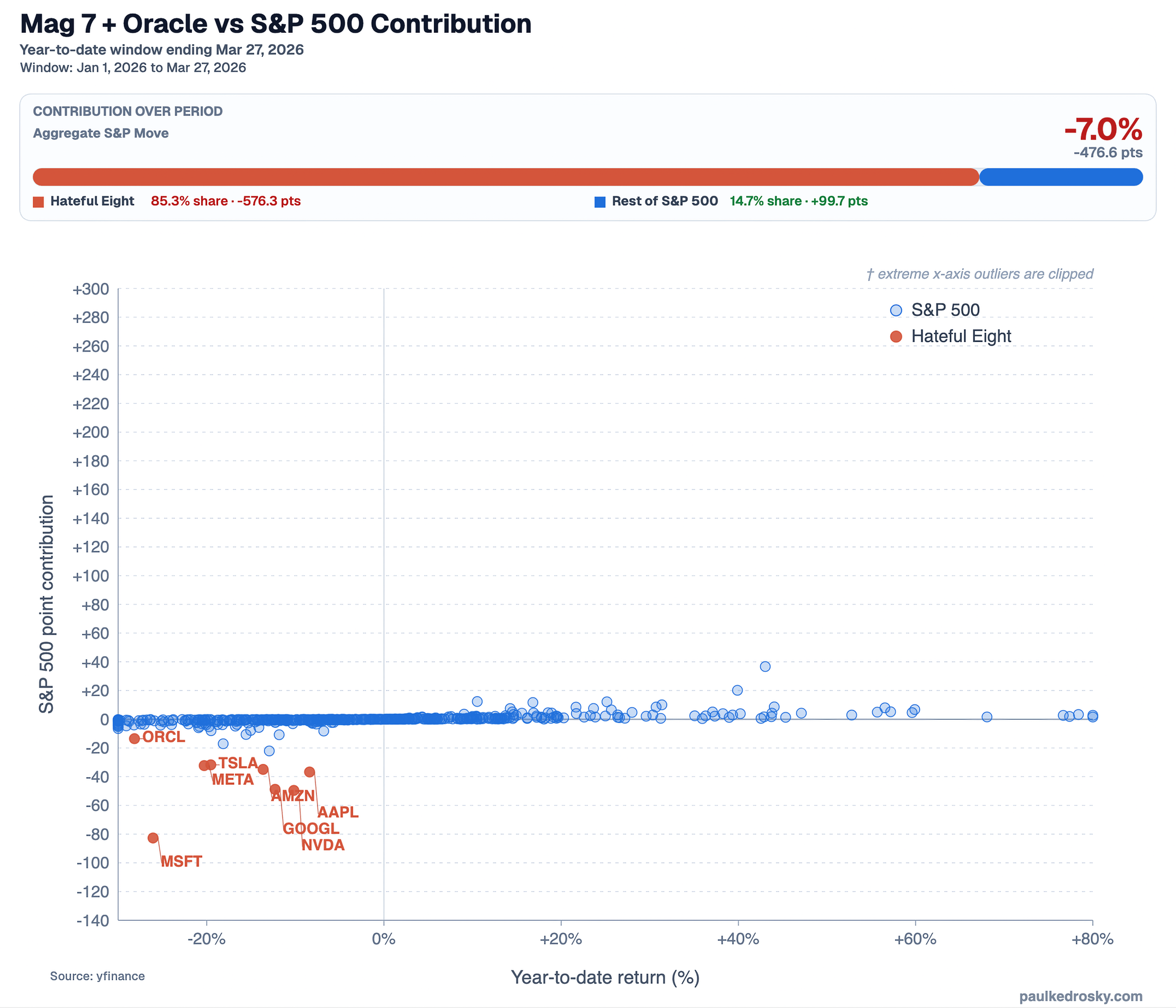

😇📈 From Magnificent 7 to Hateful 8 👿📉

The stocks that drove every S&P gain for two years are now driving almost all of its decline.

Paul Kedrosky made this graph to visualize how much of the S&P 500’s decline is due to the formerly-could-do-no-wrong Big Tech (+Oracle):

The “Hateful Eight” — the Mag 7 + Oracle that drove index gains for the past two years — account for 85% of the year-to-date drawdown, subtracting roughly 576 points. The other ~490 companies collectively subtracted about 100 points.

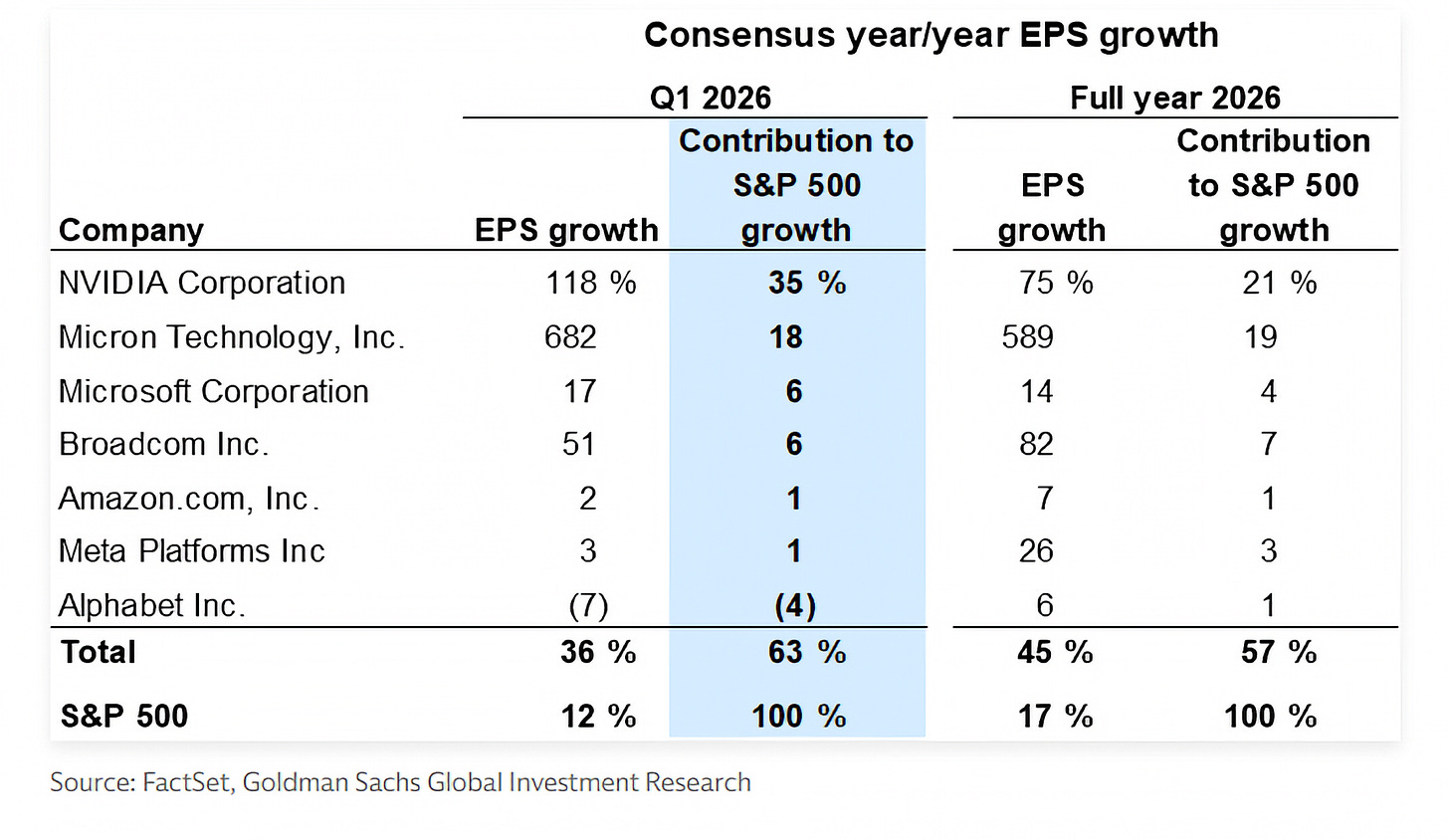

Speaking of concentration: Nvidia alone represents 35% of the S&P’s Q1 earnings growth, while the much smaller Micron ($370bn market cap vs $4.2 trillion for Nvidia) represents 18% thanks to its holy-memory-cycle 682% EPS growth:

🗣️💬 Benedict Evans: 900 Million Users, and Then What? Why Better Models Don't Solve the Real Problem💡

This is to be paired with my highlights from a piece that Ben Evans wrote explaining some of the challenges facing AI labs and the ecosystem that supports them.

I find Evans worth listening to because, in Claude Shannon's terms, he is providing real information. He’s saying things that are different, and sometimes opposite, to what a lot of the AI talking heads are saying these days, and that’s valuable. I make an effort to seek out the more skeptical POV on AI to avoid being in an echo chamber, and I think he’s one of the most reasoned skeptics (unlike many of the others that I won’t name because I want to keep this positive).

Here are a few highlights, but the whole thing is worth your time:

OpenAI may have massive mindshare, but not a durable moat 🏰

Ben Evans: That’s the puzzle. This great strategic problem for OpenAI is you’ve basically got commodity technology. You don’t have a network effect. You’ve got 900 million weekly active users, but most of them are not using it every day and can’t think of anything to do with it. Only 5% of them are paying for it. The usage is like a mile wide but an inch deep. You’ve got to swap the mindshare and momentum you have for something more durable.

They did this video sharing app at the end of last year that didn't work. There's an App Store—or are we on the second or third App Store now? I've lost count. There's an e-commerce integration, but guess what? E-commerce integrations are really hard and complicated because there are millions of merchants and billions of SKUs. Google and Meta have failed at that twice. It turns out that OpenAI went, "No, we probably can't build that ourselves right now." So there's this challenge of how you get from having mindshare and one of the good models to having some kind of a durable platform business where you've got developers, users, and corporate accounts—something that's locked in and doesn't just depend on you having the best model next week.

As a power user of multiple AI products, I find it easy to forget that for most people, usage is shallow, differentiation feels small, and they don't really know what to do with it.

The world outside my info-circle is still very different.

AI product teams are reacting to research, not steering from user needs 👨🔬🧪

Ben Evans: The way it works is you turn on your computer in the morning and you’ve got an email from the research group that says, ‘Hey, guess what? We’ve got this cool thing.’ Then your job is to go and do something with it. You get the email saying we’ve got a new voice model, and it’s like, ‘Okay, I guess we’re adding a microphone button today.’ You start from the technology; you don’t control the product strategy. That’s how science works, but you don’t know what’s going to get built.

…This is inherent in where we are: this technology is continually shifting, and you don’t know what it will be able to do next week. You’re a strategy taker, not a strategy setter.

Obviously, Sam and Dario and so on are setting that fundamental research strategy, but the thing comes back in six months and it may work or it may not. I opened the last essay I wrote taking that quote from Simo and comparing it with Steve Jobs famously saying you can't start with the technology and work forward to the product; you've got to start with the user experience and work backwards to the technology.

This is something we’ve seen a lot in the past, especially with Apple vs. the tech research labs. Research-driven labs come up with very impressive stuff. They make great demos. But rarely products.

Starting with real user needs — even ones users don't know they have yet (the old Henry Ford "faster horse" line 🐴) — is what makes the best products. And the most defensible.

AI will likely mean more software, not less 💾

Matt Turck: To the point you're making, presumably you're not in the camp of believing that people are going to be building their own software with Claude Code.

Ben Evans: […] AI coding means coding is way cheaper and easier. There’s a whole bunch of stuff you couldn't do in software that now you can. There will be way more software, and that will pick up many more of those use cases that weren't automated before, either because they were too small or because you actually couldn't automate that thing with traditional software. [...]

Matt Turck: So what do you think happens to SaaS?

Ben Evans: Way more software. But as an independent category in stock markets? We have to unpick that. If it’s much cheaper to write software, there will be more of it. Who will be doing that? It’s a combination of who understands how software works and who has understood the problem. Frame.io was not made by some person working at a video production suite because that’s not how they think; they’re not product people. There’s a different skill to working out what the code should be doing.

There will be way more software both for stuff that doesn’t need AI except to create it, and stuff that needs AI to do the new thing. Some incumbent software will go away—big expert systems where an LLM can do it better. This seems like a repeat of SaaS. SaaS meant you had two orders of magnitude more software, and some incumbents got completely screwed. When we went from mainframes, the big company had five pieces of software. With on-prem, you had dozens. With cloud, you have hundreds. You should presume with this you’ll have way more software. [...]

Most SaaS companies are database wrappers where somebody realized there is a problem and a way of turning it 90 degrees. The hard part was not writing SQL queries on AWS. The hard part of writing software is all the other stuff: what should the code be doing, how do we tell people to use it, and what should we charge?

Cheaper software creation expanding the overall surface area is fairly consensus. Coding being the least important part of building a successful software company is much less so. Just look at public software valuations right now. Time will tell.

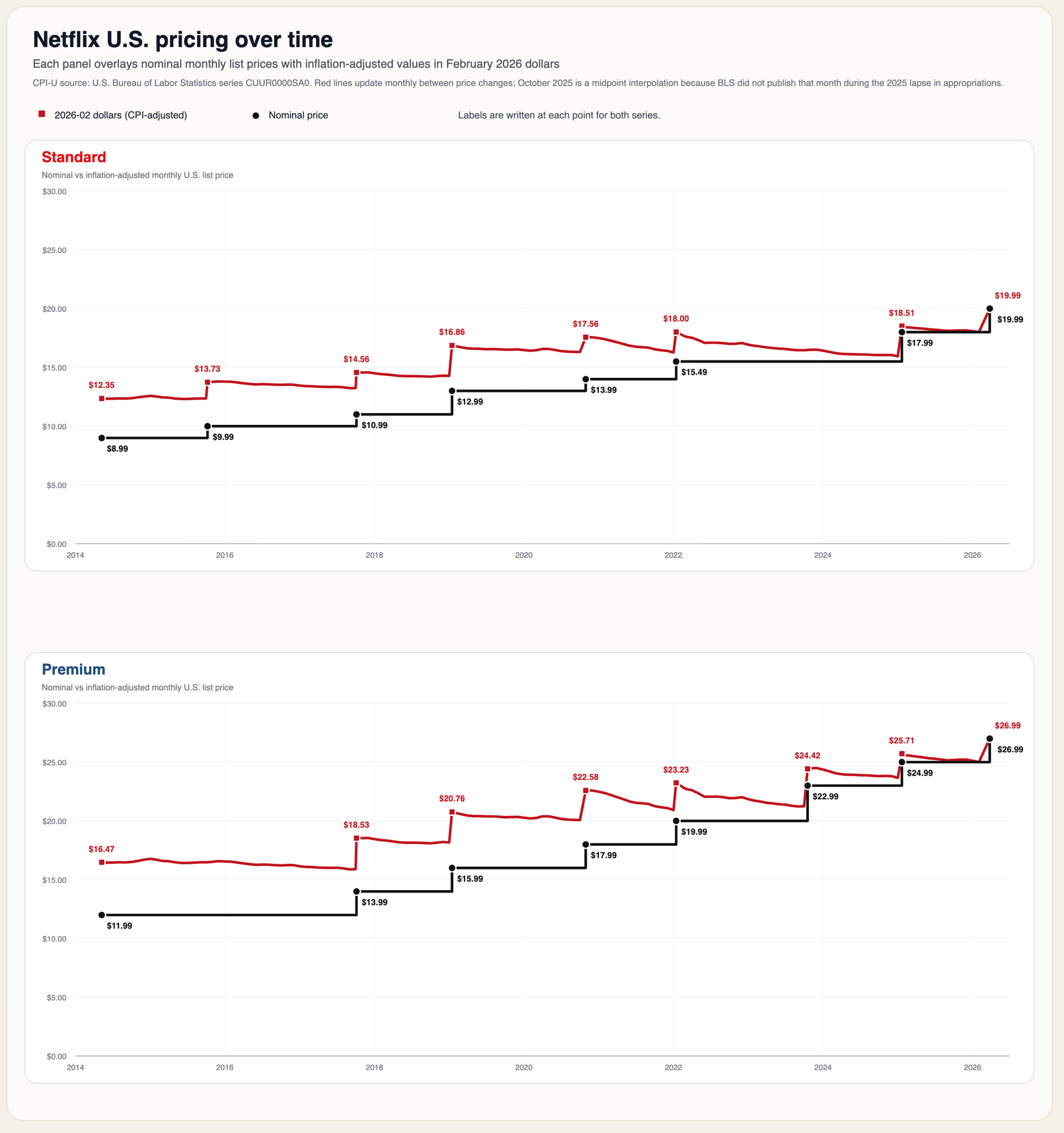

📺 Inflation-Adjusted Netflix pricing since 2014 📊

The idea for this came from Paul Crowley. He asked Claude to create a graph of Netflix pricing in the U.S. over time, along with the inflation-adjusted prices in today’s dollars.

Because Claude usage limits are so tight these days, I asked Codex to build a more elaborate version for both the Standard and Premium options:

Look at that inflation post-2020!

The adjusted Standard pricing went from $12.35 in 2014 to $19.99 in 2026, and from $16.47 to $26.99 for Premium. Those are increases of 62% and 64%, respectively.
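If you want to check the percentages yourself, here is a minimal sketch. The four prices are the inflation-adjusted figures quoted above; the annualized rate is my own addition and assumes a smooth 12-year compounding path from 2014 to 2026, which real price hikes (stepwise, every year or two) don't actually follow.

```python
def pct_increase(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


def annualized_pct(old: float, new: float, years: int) -> float:
    """Compound annual growth rate in percent, assuming smooth compounding."""
    return ((new / old) ** (1 / years) - 1) * 100


# Inflation-adjusted prices in today's dollars, as quoted in the text.
standard_2014, standard_2026 = 12.35, 19.99
premium_2014, premium_2026 = 16.47, 26.99
YEARS = 2026 - 2014

standard_pct = pct_increase(standard_2014, standard_2026)
premium_pct = pct_increase(premium_2014, premium_2026)
standard_cagr = annualized_pct(standard_2014, standard_2026, YEARS)
premium_cagr = annualized_pct(premium_2014, premium_2026, YEARS)

print(f"Standard: {standard_pct:.0f}% total, {standard_cagr:.1f}% per year")
print(f"Premium:  {premium_pct:.0f}% total, {premium_cagr:.1f}% per year")
```

Because the inputs are already inflation-adjusted, the per-year figure (roughly 4% for both plans) is the real increase, on top of inflation.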

Do you think that’s a lot?

Honestly, probably a good value considering how Netflix’s product and catalog have evolved since 2014, and how expensive other forms of entertainment are. You can watch films and TV shows with your family for a whole month for less than one restaurant dinner or a night at the theater.

I suspect that for most Netflix customers, it’s one of their lowest cost/hour sources of entertainment.

I just wish Netflix made better original content. When’s the next Dark (2017), or Bojack Horseman (2014), or Narcos (2015), or The Queen’s Gambit (2020), or Mindhunter (2017) coming out? It’s been a while since they made things on that level ¯\_(ツ)_/¯

🧪🔬 Liberty Labs 🧬 🔭

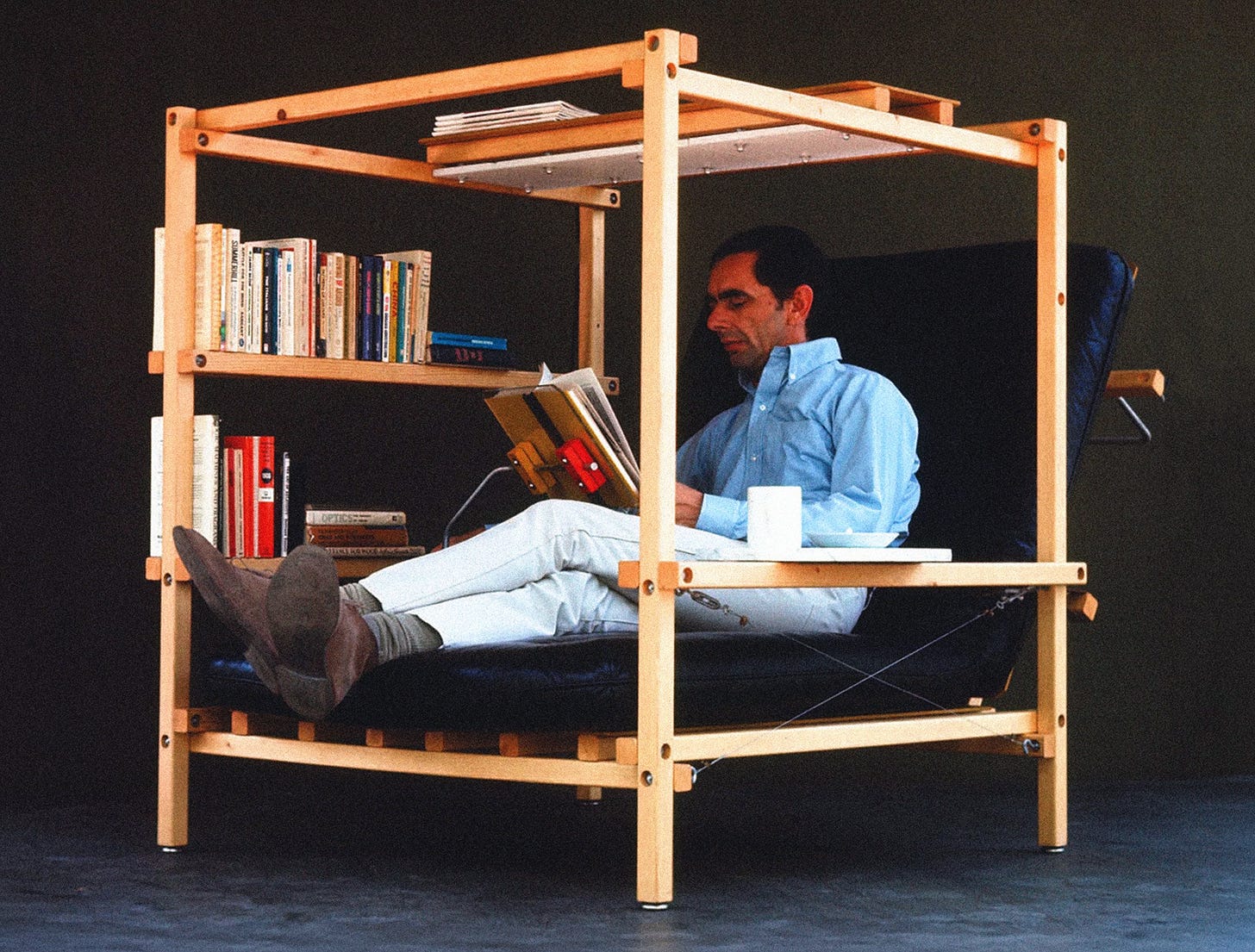

📚🪑☕ Ken Isaacs' Superchair from 1955 #LifeGoals

What more can you ask for?

It was originally conceived in 1955 and then formalized for production in 1967:

Built-in book rest, shelves, lamp, drink tray, and a seat back that folds into a bed.

A place for "inventive work and the individual search for peace of mind", as he put it.

It was meant for people to build it themselves, hence the almost unfinished look. Blueprints were published in Popular Science in 1968.

Hey IKEA, are you seeing this?

h/t Oliver

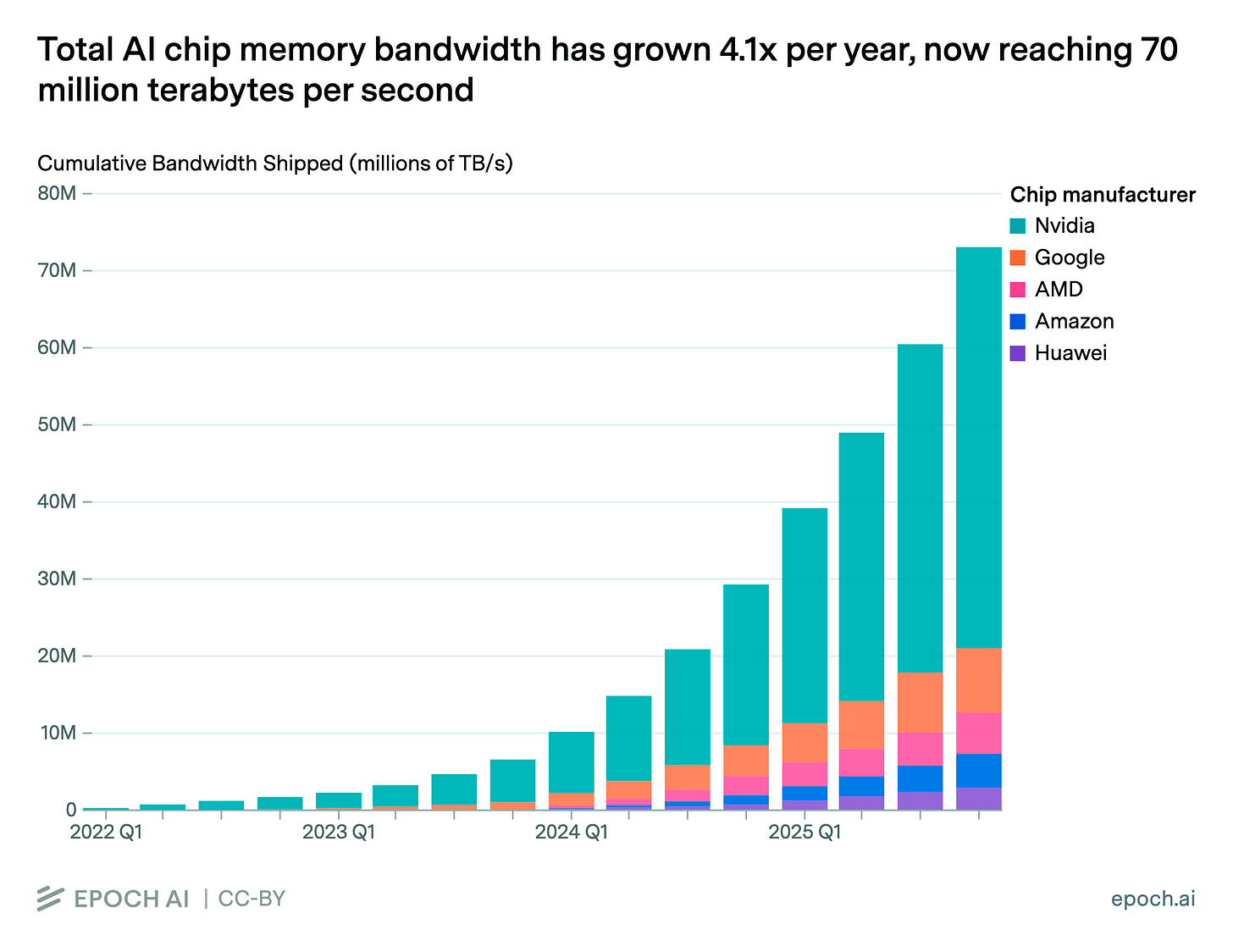

‘Total AI chip memory bandwidth has grown 4.1x per year, now reaching 70 million TB/s’ 🐜🐜🐜🐜🐜🐜

How much aggregate memory bandwidth is that?

“That’s around 300,000x more data per second than global internet traffic.”

🤯

Nvidia's dominance is clear. Huawei barely registers, despite the effort.

“Why track this? AI inference is frequently bottlenecked on memory bandwidth, not compute. Aggregate HBM bandwidth is a rough proxy for the world’s total capacity to serve AI models.” Source.
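To put those numbers in more intuitive terms, here is a rough sketch of two derived figures: the doubling time implied by 4.1x annual growth, and the global internet traffic implied by the 300,000x comparison. Both are my own arithmetic on the quoted numbers, assuming steady exponential growth and taking the 300,000x multiple at face value.

```python
import math

# Figures quoted above.
TOTAL_BANDWIDTH_TB_S = 70e6   # 70 million TB/s of aggregate AI chip memory bandwidth
GROWTH_PER_YEAR = 4.1         # 4.1x per year
INTERNET_MULTIPLE = 300_000   # "around 300,000x" global internet traffic

# Doubling time under steady exponential growth: solve 4.1 ** t = 2 for t (in years).
doubling_months = 12 * math.log(2) / math.log(GROWTH_PER_YEAR)

# Internet traffic implied by the comparison (derived, not a sourced figure).
implied_internet_tb_s = TOTAL_BANDWIDTH_TB_S / INTERNET_MULTIPLE

print(f"Doubling time: {doubling_months:.1f} months")              # 5.9 months
print(f"Implied internet traffic: {implied_internet_tb_s:.0f} TB/s")  # 233 TB/s
```

So if the trend held, aggregate bandwidth would double about every six months.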

💻 Microsoft: We Will Make Windows 11 Suck Less 💾

Maybe what it took for Microsoft to wake up and take Windows quality more seriously was for Apple to release a $600 laptop — the MacBook Neo — that reviewers are calling the best value laptop on the market.

In a blog post, MS committed to fixing a lot of annoyances for Windows users:

Integrating AI where it’s most meaningful, with craft and focus: You will see us be more intentional about how and where Copilot integrates across Windows, focusing on experiences that are genuinely useful and well‑crafted. As part of this, we are reducing unnecessary Copilot entry points, starting with apps like Snipping Tool, Photos, Widgets and Notepad.

They finally understood that people were tired of getting Copilot crammed down their throats, Clippy-style! 👀📎

Reducing disruption from Windows Updates: Receiving updates should be predictable and easy to plan around, so we’re giving you more control. This includes the ability to skip updates during device setup to get to the desktop faster, restart or shut down without installing updates and pause updates for longer when needed, all while reducing update noise with fewer automatic restarts and notifications.

That’s been going on for decades at this point!

More taskbar customization, including vertical and top positions: Repositioning the taskbar is one of the top asks we’ve heard from you. We are introducing the ability to reposition it to the top or sides of your screen, making it easier to personalize your workspace.

What a concept!

Improving system performance: Reducing resource usage by Windows to free up more performance for what you’re doing. Improved memory efficiency, lowering the baseline memory footprint for Windows. More consistent performance, even under load. Reducing interaction latency.

Why hasn’t this always been a priority?

Increasing OS, driver and app reliability: Strengthening the Windows foundation by reducing OS level crashes, improving driver quality and app stability across our ecosystem so PCs run smoothly and reliably every day.

Creating easier, faster, and more stable connections with Bluetooth accessories. Fewer USB-related crashes and connection loss.

Anyway, these are just my highlights; the full list is here.

This reminds me of when Apple released its Snow Leopard OS back in 2009. They marketed it as a ‘polish’ release that wasn’t focusing on new features, but rather on making what’s already there better and more reliable. I wish Apple would also go back to this.

🎨 🎭 Liberty Studio 👩🎨 🎥

🌒🎶 Returning to The Dark Side of the Moon & The Snob Sandwich 🥪

Growing up, I knew that Dark Side of the Moon was one of my dad’s favorites. He rarely listened to it, but I knew he considered it special. So when I got into music, it was one of the first things I listened to over and over again.

It blew my tiny mind.

It was unlike anything else I was hearing on the radio or finding in my dad’s records and CDs (this was before I started my own collection, which eventually grew to about 1,500 CDs before I switched to MP3s and then streaming).

As I discovered other things, I did what many music fans do and stopped listening to some of my early favorites. I think I subconsciously started seeing them as “for beginners,” the training wheels of music.

Even with Pink Floyd, I did what a lot of fans seem to do and got more into Wish You Were Here, Meddle, and Animals. I also found other prog and art-rock bands that were more obscure and therefore cooler.

I’ve heard this described as the ‘snob sandwich’ 🥪

It’s similar to the midwit curve: beginners on one side, experts on the other, and the snobs in the middle. The idea is that with many things, what beginners love often gets rediscovered by more experienced people later on, but only after a phase of looking down on it for being too obvious.

I lived this with the Beatles, one of my first musical loves. For years, I barely listened to them because they felt too “obvious.” But every so often, I come back and they blow my mind all over again.

The nice thing about coming back to music after so long is that I’ve changed, so I hear it differently. Back when I first heard Pink Floyd, I didn’t play guitar and had far fewer musical points of reference. On recent Dark Side listens, I noticed all kinds of wonderful guitar solos and basslines that went right over my head as a kid.

One thing that works against Dark Side now is that it’s really meant to be heard as an album. It doesn’t hit the same way when random tracks are sprinkled into a shuffled playlist, which is how a lot of people seem to listen to music now. There are deliberate buildups and releases.

The crescendos hit differently when you’ve earned them. 💥

Granted, some of the album’s gimmicks and long intros work better the first time you hear them than the 1,000th (those clocks 🕰️), but see it as training for your attention span.

When’s the last time you listened to The Dark Side of the Moon from beginning to end?

Why not today? 🌒
