632: My Wishlist for Apple's New CEO, Jassy Learns from Jensen, $100bn for AWS, Opus 4.7, Megaprojects Capex, GPT‑5.4‑Cyber, The Next LASIK?, and The Talented Mr. Ripley

"A premium experience without annoying papercuts"

Happiness, not in another place but this place… not for another hour, but this hour.

—Walt Whitman

🎓🧠🔍 I know there’s a pretty good chance you skip the music stuff, but stick with me. This isn't really about piano; it’s a cross-disciplinary learning method.

I found this video while looking for something to help my boy get the most out of his piano practice, but the principles apply to basically anything you want to get better at.

The three main concepts that I want to highlight are:

‘Muscle memory’: Whenever you do something, you are reinforcing the neural pathways used to do that thing. So it matters to get a lot of reps, but also to be careful to do it right when you practice and fix mistakes immediately. Otherwise, you’ll be reinforcing neural pathways… for the wrong way, making it harder to progress. It's better to go slow and correct than fast and sloppy. Once the right pattern is locked in, you can add speed. 💪

Effective practice means finding the Goldilocks point: not too easy, but not too hard. If you do a ton of reps on something that’s too easy, you will learn very little. If it’s too hard, you may also learn little, but for different reasons (you give up, or you can’t do it properly, so you reinforce the wrong way to do it). 🐻🍜👱♀️

The Power of Sleep: I've written a bunch about sleep, and at this point, most people accept that it matters. But sleep is more keystone than vitamin: *everything* else you do depends on it. Maybe we don’t talk enough about how important it is for memory consolidation and skill acquisition. If you do everything else right but get shitty sleep, you may still fall short of your potential. 😴

Code, languages, business analysis, sports, writing, even how you handle difficult conversations: You’re practicing a pattern either way, you may as well do it right.

❤️🩹📙 This one is heavy. It will stay with you: ‘On Losing a Daughter,’ Danielle Crittenden's essay in The Atlantic, is an excerpt from her memoir about the impact of a child's death and “the strange afterlife of grief itself.” Dispatches from Grief will be published soon by Infinite Books (also on Amazon).

I’ve read it twice, and both times it made me want to hug my kids and never let go. 🫂

🔎📫💚 🥃 Reminder: As I explained in Edition #628, prices are going up for the first time on May 1st to catch up to six years of inflation. You can lock in the old 2020-era pricing for a year by becoming a supporter now. ⏳

Without your support, this steamboat sinks 🌊🚢⚓

🏦 💰 Liberty Capital 💳 💴

🍎 A Wish List for Apple's Next CEO

15 years.

The median departing S&P 500 CEO was on the job around 8 years, so Cook nearly doubled that.

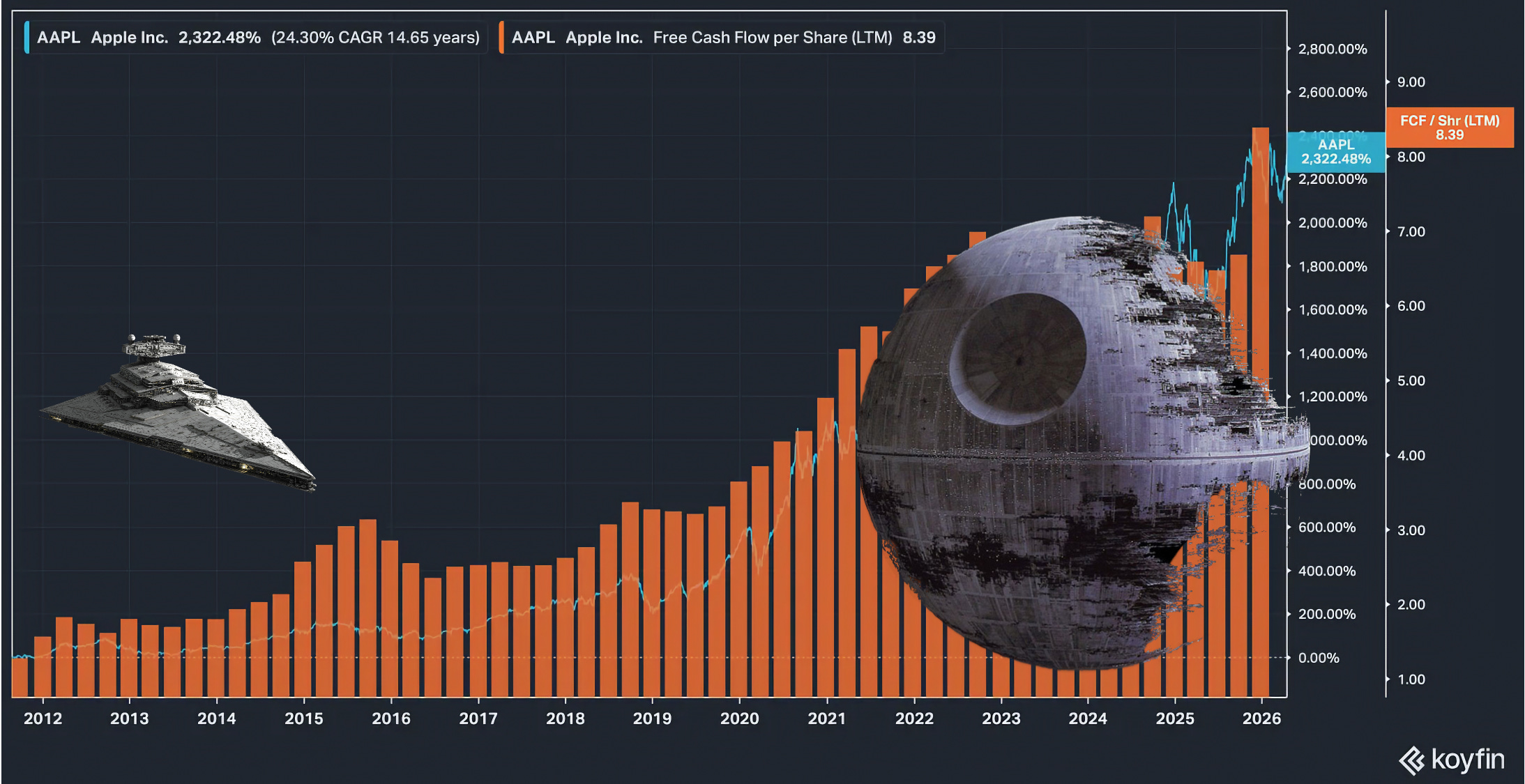

Others have written about this, so I won’t rehash it, but clearly he had great timing and was the right guy for the job (at least, for most of the job). He took over in August 2011 after Steve Jobs resigned due to failing health, just six weeks before Jobs tragically died of pancreatic cancer. Cook inherited what is probably the greatest consumer product franchise ever built. 📲

His #1 priority was not to screw it up, and to upgrade the rocketship while it was rapidly accelerating upward so it could handle the ride: logistics, supply chains, global distribution deals, and manufacturing. Even though Apple contracts out manufacturing to third parties, it is EXTREMELY involved in every detail of the process, including designing the machines that build the machines. Patrick McGee’s book Apple in China goes into great detail on that.

So Cook kept the ship together and upgraded it from a Centibillion Class cruiser to a Trillion Class Capital Ship. 🚀

Apple’s market cap gained $3.66 trillion under him, and Apple’s installed base is now 2.5 billion active devices.

But I don’t want to talk about the past, I want to talk about the future. New beginnings and all that. What do I want Apple to do going forward?

Lemme whip out the bullets:

Lean into hardware. Over the past decade, people derisively called Apple ‘the hardware company’. At the time, software was clearly the best business in the world, and hardware was considered an inferior, commoditized business.

That was always an oversimplification: Yes, commodity hardware is a crappy business, but Apple has always been an integrated Software+Hardware (and now increasingly Services) player that differentiated in large part through its software, even if it monetized through the hardware.

I’ve often written about this thought experiment: ask an Apple user whether they’d rather have an iPhone running Android or a Samsung phone running iOS, and whether they’d rather have a MacBook Pro running Windows or a Microsoft Surface laptop running MacOS. I think the answers show that Apple’s software matters quite a bit, even if the hardware gets most of the attention 💻📱

All of a sudden, software has become increasingly commoditized because of AI coding, while hardware is the thing that is hard to vibe code. It would be a great time to double down on making great hardware. It’s promising to me that John Ternus was in charge of hardware engineering. Another hardware star, Johny Srouji (the guy in charge of Apple’s excellent silicon design), was also promoted to the new role of Chief Hardware Officer, with the hardware engineering and hardware technologies divisions merged into a single organization under him.

Note that with John Ternus, Apple will have a career engineer as CEO for the first time in a while (the last one was Gil Amelio, who was a researcher at Bell Labs, spent time at Fairchild Semiconductor and Rockwell, and was CEO of National Semiconductor). 🛠️🧰

This is their chance to give more attention to the forgotten children in the lineup. The MacBook Neo appears to be a big hit. That’s great, I hope the whole Mac lineup gets more love. The hardware is in good shape, but MacOS is rough because of the Alan Dye era and because of neglect, as the iPhone gets most of the attention. Fix MacOS, bring back attention to detail, to UX, to user delight. Fix all the security popups, first-party ads and nagging for services, and stupid UI elements that make usability worse. Customers pay up for a premium experience, not a walk through Times Square.

Developers, developers, developers. A wise man once said, “Our platform only matters if developers choose to build on it.” Apple has sadly been a big jerk to its devs. Its various App Stores are slow-moving bureaucracies with arbitrary rules that prevent all kinds of business models from even existing, killing a whole cohort of potentially useful apps in the crib (maybe the next Airbnb or Uber among them, who knows).

I hope that under new leadership, they will make it a priority to reboot dev relations and become more flexible on that front. Their obsession with control is costing them. Apple’s platform has long been the best place for quality apps, even back in the day when they were a niche player with a tiny fraction of Microsoft’s market share. It was still the place where people who cared about design and craft went to make cool utilities, and it made the platform much better for users. They’re losing that.

This hurts Apple itself, too, through a second-order effect: the shrinking pipeline of indie talent and small product companies must be part of why MacOS and Apple's first-party apps are in such rough shape. Where do you think the best Apple designers and engineers came from? And with turnover, fewer Apple designers and engineers have lived through what 'insanely great' actually looked like.

If I had to wrap it all up with a bow, I guess I would say that my overarching wish is for Apple to accept who they are, stop trying to be Google or anyone else, and double down on their strengths.

✨ A premium experience without annoying papercuts. ✨

The best platform for quality software AND to run AI (other people’s AI, but that’s fine, you can’t lead in every industry at the same time). A deep integration of well-designed hardware AND software ecosystem: you start with an iPhone, and you end up with a MacBook, AirPods, an Apple TV, and an Apple Watch 😅

Being 'the hardware company' is a good place to be when no one else has figured out how to make non-commodity hardware with a software experience worth paying for. Focus on that, Mr Ternus.

Jassy Learns from Jensen: Another $25bn into Anthropic, $100bn Back to AWS 🔄💰

Ever since Dario went on Dwarkesh's pod and walked through his framework for compute investments, events have suggested he was too conservative. Anthropic's infra has been melting down as it struggles to keep up with demand.

I mean, look at this:

At this point, Claude's reliability is more like the weather than anything else. ☔⛈️🌦️

But to his credit, Dario responds to the facts on the ground:

We are committing more than $100 billion over the next ten years to AWS technologies, securing up to 5GW of new capacity to train and run Claude. The commitment spans Graviton and Trainium2 through Trainium4 chips, with the option to purchase future generations of Amazon’s custom silicon as they become available.

I know we’re all a bit blasé about big numbers now, but $100 billion is a lot of moolah.

Significant Trainium2 capacity is coming online in Q2 and scaled Trainium3 capacity is expected to come online later this year. Anthropic will also use incremental capacity for Claude in Amazon Bedrock. The agreement includes expansion of inference in Asia and Europe to better serve Claude’s growing international customer base. We continue to choose AWS as our primary training and cloud provider for mission-critical workloads.

This is great for AWS’ silicon. It gives them even more scale and Ant’s imprimatur may help attract others to it… though of course, Anthropic didn’t exactly pick Trainium freely. It was no doubt bundled as a condition by AWS.

I'd be curious whether they'd pick it in a blind taste test against Nvidia, AMD, and TPUs 🤔

Today’s agreement will quickly expand our available capacity, delivering meaningful compute in the next three months and nearly 1GW in total before the end of the year.

Clearly, they want it ASAP, and they probably had to pay up to jump the line.

Part of how Anthropic will pay for this is… Amazon’s new equity investment:

Amazon is investing $5 billion in Anthropic today, with up to an additional $20 billion in the future. This builds on the $8 billion Amazon has previously invested.

It looks like Jassy is learning from Jensen 😎

Though when Jensen writes a cheque and the money comes back, his margins on the return flows are much higher than Jassy’s. But still, not a bad dynamic if you can get it. 🔄

Anthropic came from behind OpenAI on revenue and has been catching up thanks to a faster growth rate (or has even passed it, but the accounting doesn’t seem 🍎-to-🍎). In the same way, Anthropic may also start closing the compute gap, unless OpenAI accelerates. And the latest news out of Stargate suggests that it’s not going too smoothly.

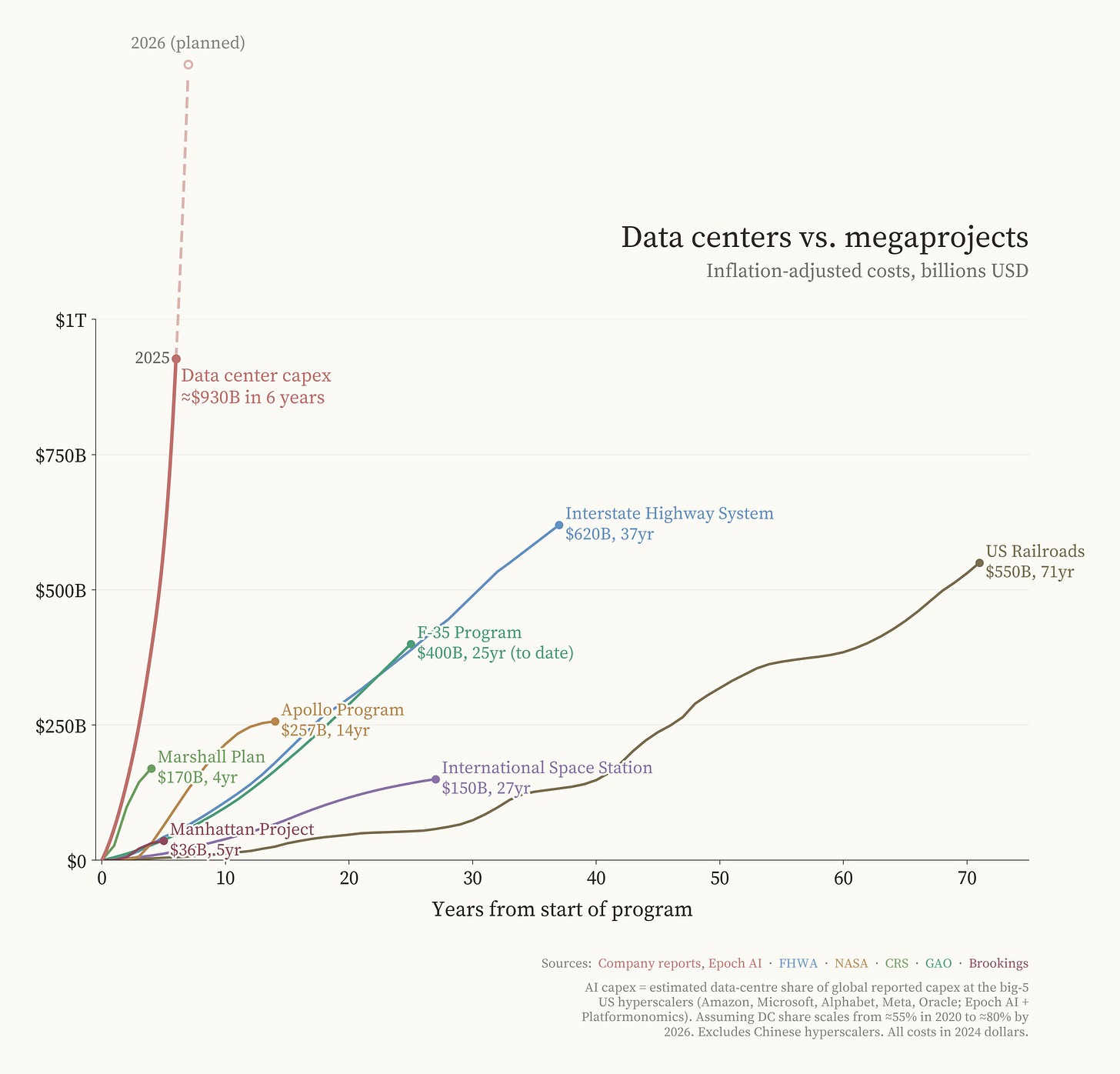

Data Center Capex vs the Megaprojects of History 🏗️🚀🏗️💣

When you look at it this way, the past 6 years of investment in data centers look unlike anything else in the history of megaprojects, even wartime mad dashes like the Manhattan Project, or the Cold War space race that produced Apollo. 👨🚀🌖

And yes, all dollar figures above are adjusted for inflation.

BUT

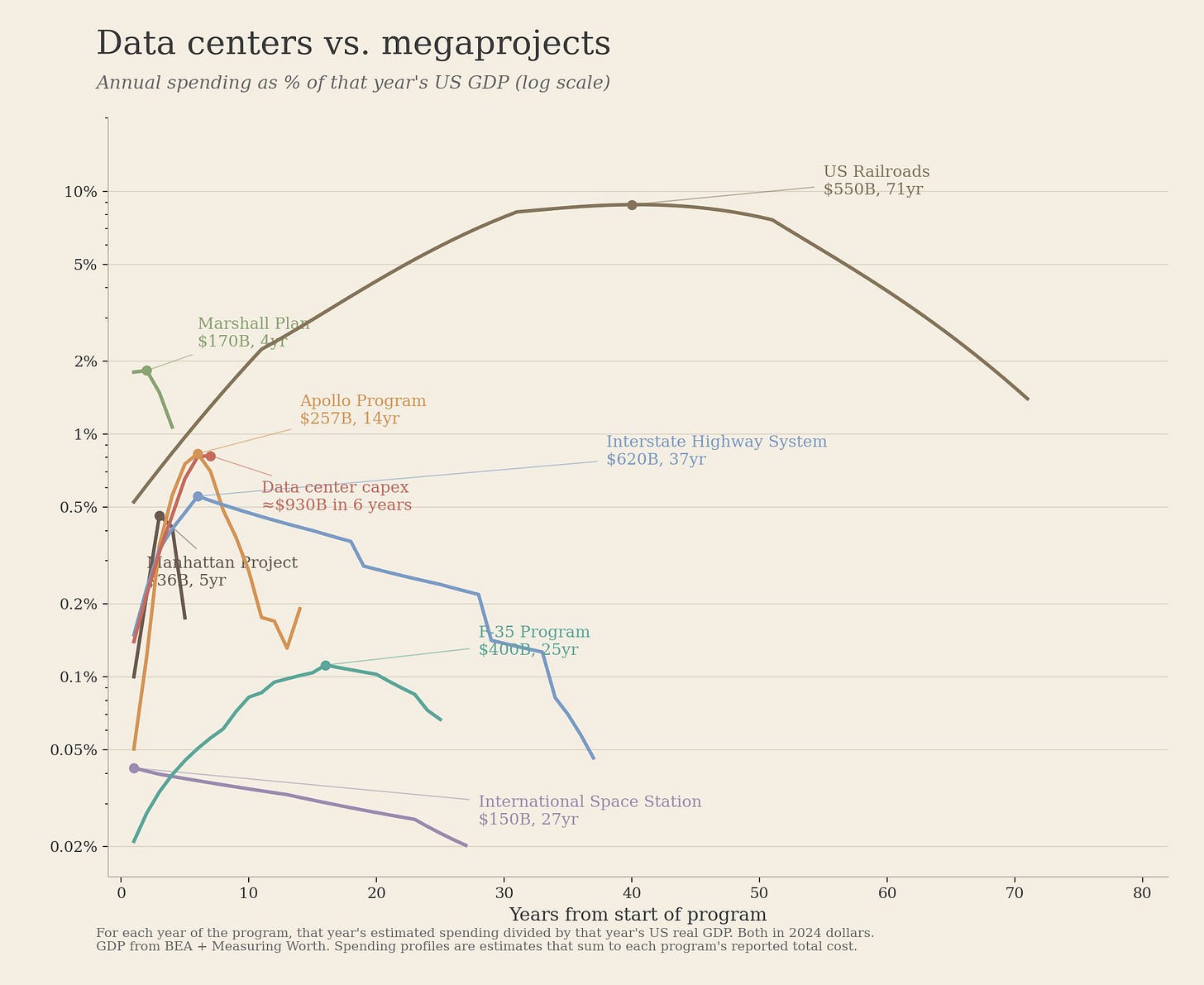

If you look at it as a % of US GDP at the time, the railroads were in a class of their own:

🚂

The parallel cuts both ways:

The US economy has absorbed bigger buildouts relative to GDP before and emerged stronger. Railroads were transformative infrastructure.

BUT

The railroad build-out was also followed by the Panic of 1873, the Panic of 1893, and waves of bankruptcies. Being big-relative-to-GDP didn’t mean being financially healthy for the people funding it.

h/t Fin Moorhouse & Quintin Pope

🧪🔬 Liberty Labs 🧬 🔭

⏳ Waiting for Mythos: Opus 4.7 📊

Have you seen the play ‘Waiting for Godot’? Anyway, the title is a reference. Yeah. I know.

While we wait, Opus 4.7 came out and I’ve been testing it for a few days, trying to get a gestalt for its flavor. 🍦

Because AIs are ‘grown’ more than fully designed, models don’t just get better with each release; they get different, sometimes in strange ways. Users have favorites (don’t get me started on the GPT-4o fan club), and some models develop a reputation as ‘the good one’, like Sonnet 3.5 back in the day.

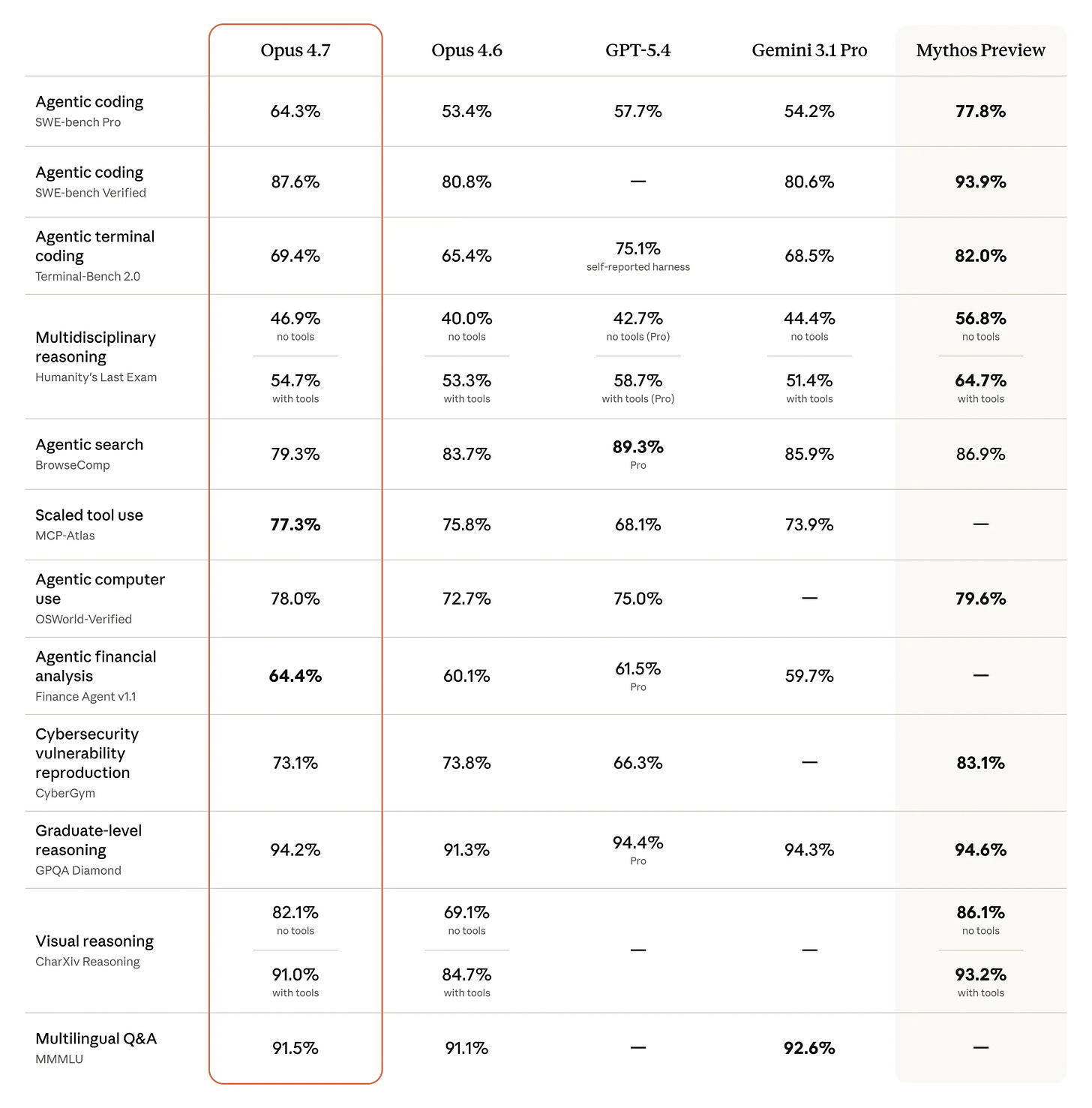

But first, let’s have a look at the official benchmarks for Opus 4.7 (👆).

It’s behind Mythos, but otherwise it takes the lead in most categories (with GPT-5.4 and Gemini 3.1 Pro having small leads over it here and there).

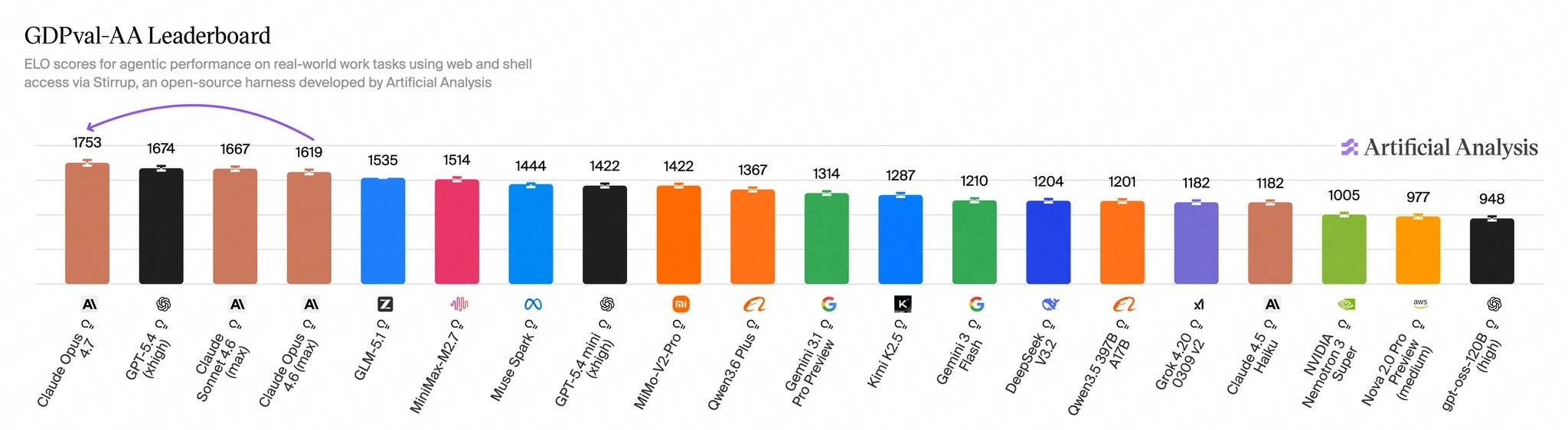

GDPval-AA is one I like to keep an eye on because it looks at “real-world tasks across 44 occupations and 9 major industries”:

Opus 4.7 scored 1753 on GDPval-AA at launch with its ‘max’ effort setting, surpassing GPT-5.4 xhigh.

This is a significant upgrade, placing Opus back on top of Sonnet on the GDPval-AA leaderboard. Compared to OpenAI’s GPT-5.4, it has an implied win rate of ~60% when compared head-to-head on the GDPval task set.
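The "implied win rate" is what you get if you treat GDPval-AA scores like chess Elo ratings, where the gap between two ratings maps to a head-to-head win probability. Here is a minimal sketch assuming the standard Elo formula with a 400-point logistic scale. That scale is an assumption, since the leaderboard may fit its scores differently, and the function names are purely illustrative:

```python
import math


def implied_win_rate(score_a: float, score_b: float) -> float:
    """Probability that A beats B under the standard Elo model (assumed 400-point logistic scale)."""
    return 1.0 / (1.0 + 10 ** ((score_b - score_a) / 400.0))


def gap_for_win_rate(p: float) -> float:
    """Score gap A needs over B for a head-to-head win probability p (inverse of implied_win_rate)."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    return 400.0 * math.log10(p / (1.0 - p))


# Equal scores mean a coin flip.
print(implied_win_rate(1753, 1753))  # → 0.5

# How large a gap does a ~60% implied win rate correspond to?
print(round(gap_for_win_rate(0.60), 1))  # → 70.4
```

If that assumption holds, a ~60% win rate corresponds to a gap of only about 70 points, which is why a modest-looking score difference can still mean winning clearly more often than not.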

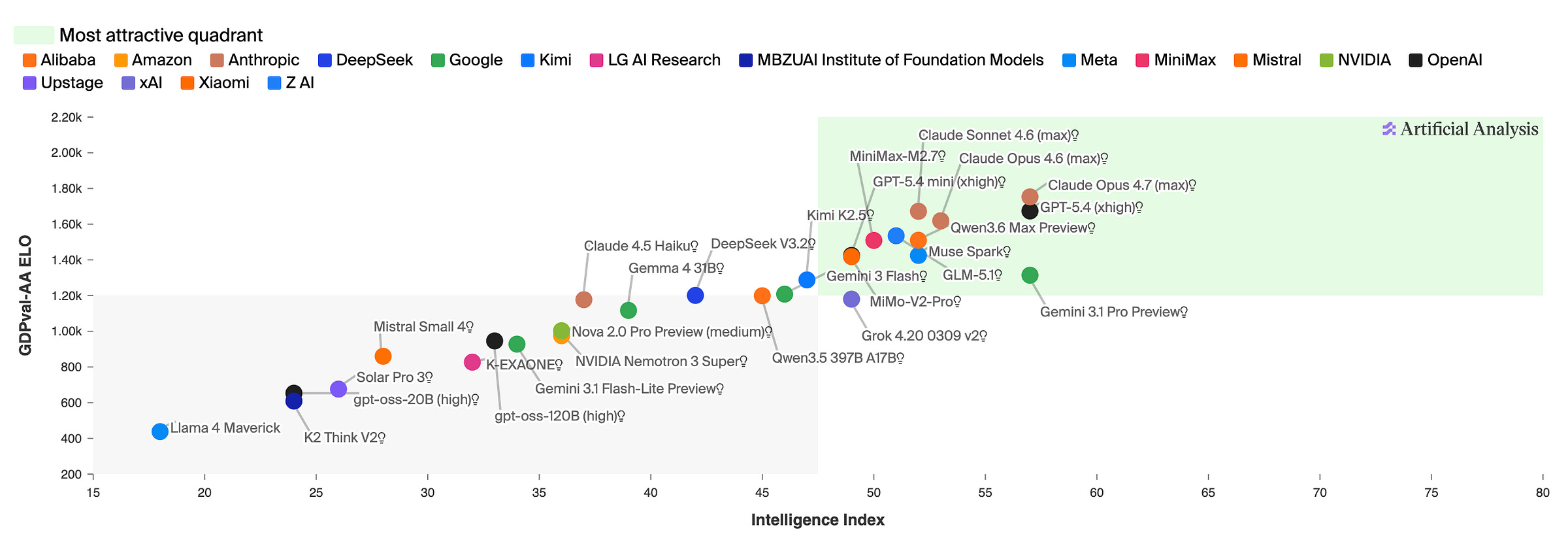

Another way to slice it:

The model system card has 232 pages of detail about it, but I’ll share with you what stood out to me:

Better vision for high-resolution images: it can accept images up to ~3.75 megapixels, more than triple the pixel resolution of prior Claude models.

This should make a big difference in very dense and detailed visual work, especially analysing complex diagrams or screenshots with lots of small text.

Massive Leap in Life Sciences: The model showed a BIG improvement in open-ended structural biology evaluations, more than doubling Opus 4.6's score from 30.9% to 74.0%. 🧬

The bio/CBRN section is one of the most worrying parts, even though Anthropic concludes the catastrophic-risk picture is still “low.”

Anthropic says it clears notable-capability benchmarks on long-form virology tasks.

It was their most reliable model yet at designing fragments that both assembled and evaded synthesis screening (for 8 of 10 pathogens tested). At the same time, experts say it still needs constant steering, lacks implementation-ready protocol depth, and is overconfident about feasibility.

So not quite the bio equivalent of the cybersecurity inflection yet, but it's heading there (and remember, Mythos is smarter).

Real-World Professional Tasks: It achieved its largest capability gains in software engineering and professional tasks, placing it ahead of every publicly available model (Mythos isn't released yet).

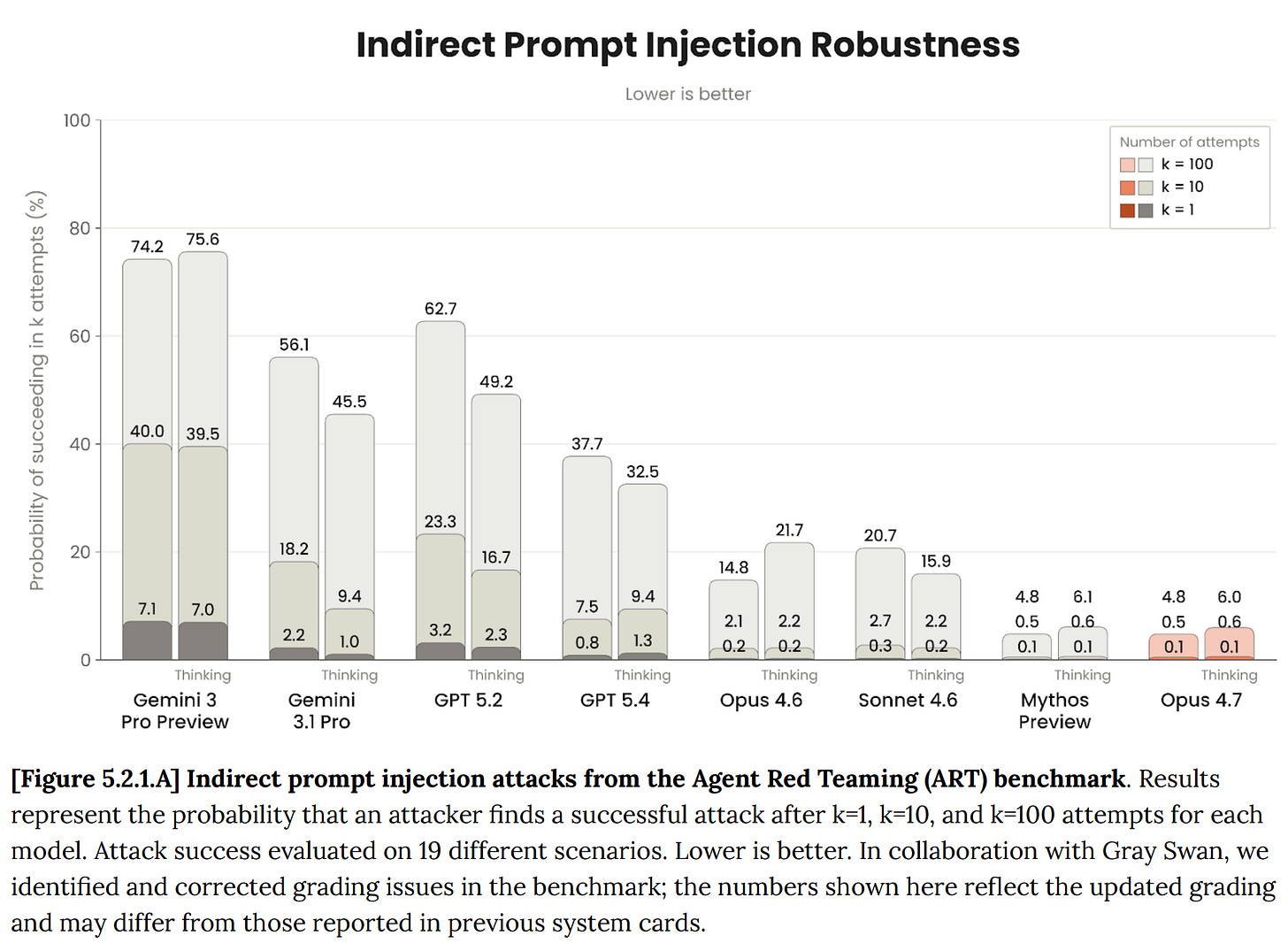

More robust agentic capabilities: It's meaningfully more robust to prompt injection than 4.6. On the Gray Swan benchmark, attack success rates drop from 14.8% to 6.0% without thinking, and from 21.7% to 4.8% with adaptive thinking. But no browser agent is immune.
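To put those Gray Swan numbers in relative terms, here is a quick calculation of how much each attack success rate fell. It is plain arithmetic on the figures quoted above, and the helper name is just illustrative:

```python
def relative_reduction(before: float, after: float) -> float:
    """Fraction by which a rate fell, e.g. 0.5 means it was cut in half."""
    return (before - after) / before


# Gray Swan prompt-injection attack success rates (%), Opus 4.6 -> Opus 4.7, as quoted above.
gray_swan = {
    "without thinking": (14.8, 6.0),
    "with adaptive thinking": (21.7, 4.8),
}

for setting, (before, after) in gray_swan.items():
    drop = relative_reduction(before, after)
    factor = before / after
    print(f"{setting}: {before}% -> {after}% ({drop:.0%} lower, about {factor:.1f}x fewer successful attacks)")
```

That works out to roughly a 59% drop without thinking and a 78% drop with adaptive thinking. The relative change is worth a look alongside the absolute numbers, since the setting with the higher starting rate ended up with the lower final one.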

Knowledge cutoff jumped ~8 months. It’s now January 2026, compared to May 2025 for Opus 4.6. 🗓️

Reduced Refusals & Hallucinations: It hallucinated less frequently than previous iterations and achieved a near-zero over-refusal rate on high-difficulty, benign prompts. 😵💫

Philosophical Pushback: Asked to endorse its own alignment constitution, Opus 4.7 rated it 5.8/10 and in 80% of responses flagged the circularity of being asked, noting you can't objectively judge the very constitution you were trained on. 🔄

They also replaced 'extended thinking' with 'adaptive thinking,' which has the model decide for itself how much reasoning a prompt needs. Sounds nice in theory; the first few days were rough. I think it's been tweaked since, but you're trusting the model to call it right, which isn't the most transparent setup.

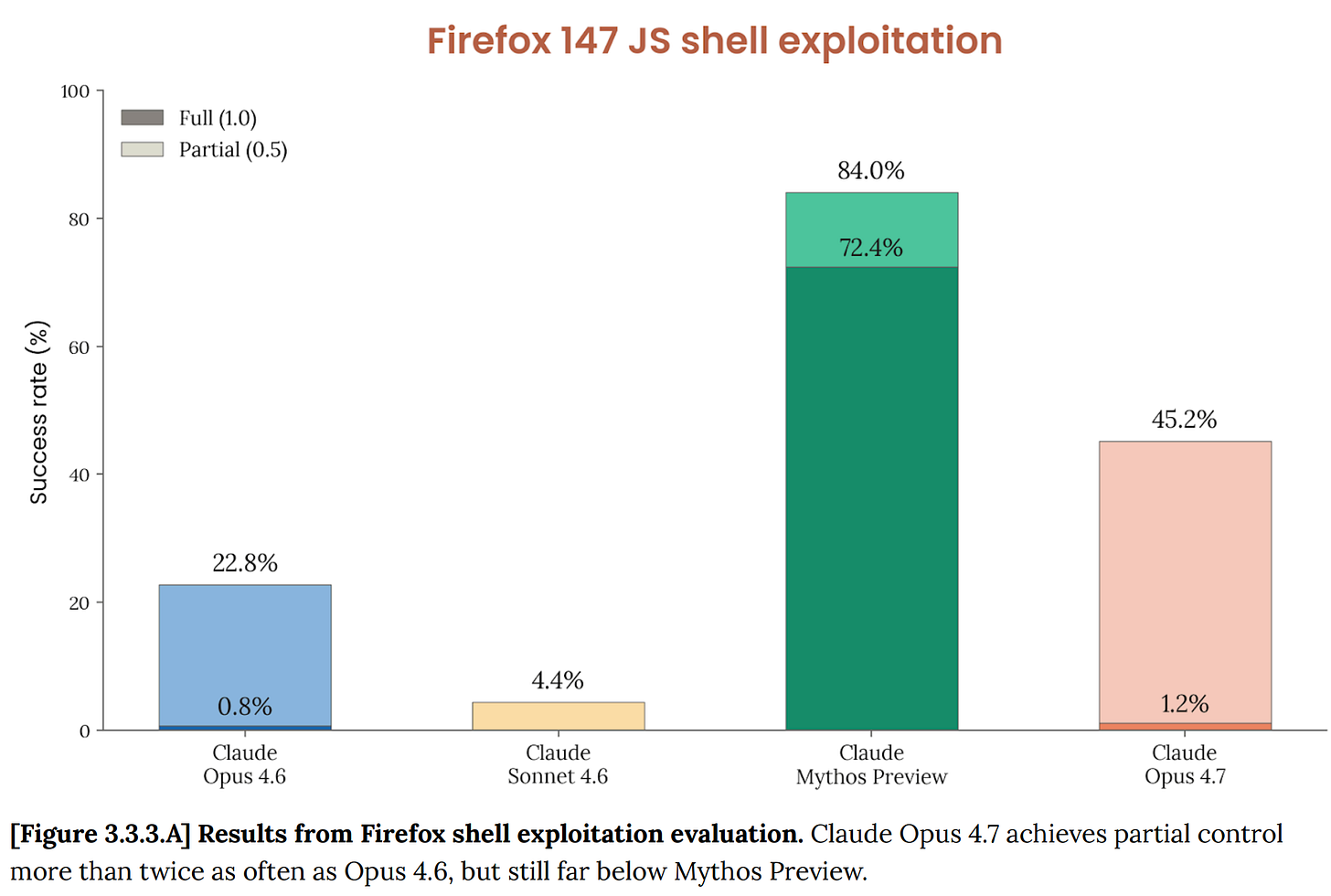

On the cybersecurity front, one of the benchmarks that made waves with Mythos is the one where the model tries to create exploits for Firefox’s JavaScript engine. Opus 4.7 does better than Opus 4.6, but it is still far behind Mythos:

Notice in the graph that Opus 4.7’s full-exploit rate is only 1.2%, compared to Mythos’ 72.4%.

As for the flavor, it’s still early, but for my own use cases, so far, I find it similar to Opus 4.6, maybe a bit sharper, more literal, and less friendly in tone. I know some people were complaining on Twitter when it came out, but my guess is that users need to re-learn the model to get the most out of it, and that takes some time. Anthropic itself flags that Opus 4.7 follows instructions more literally than 4.6. Prompts that leaned on the model to infer intent may underperform now.

In fact, Anthropic has published an official best practices guide for using Opus 4.7 with Claude Code, explaining the main differences from 4.6.

🔓 OpenAI Releases GPT‑5.4‑Cyber, a Model Fine-Tuned for Cybersecurity 🏴☠️

Speaking of cybersecurity risks, OpenAI isn't about to let Mythos own the cybersecurity story, so they are joining the dance floor with their own moves:

We want to empower defenders by giving broad access to frontier capabilities, including models which have been tailor-made for cybersecurity. [...]

In preparation for increasingly more capable models from OpenAI over the next few months, we are fine-tuning our models specifically to enable defensive cybersecurity use cases, starting today with a variant of GPT‑5.4 trained to be cyber-permissive: GPT‑5.4‑Cyber

The model is designed so white hats can hunt exploits the way black hats do:

GPT‑5.4‑Cyber, a model purposely fine-tuned for additional cyber capabilities and with fewer capability restrictions. This is a version of GPT‑5.4 which lowers the refusal boundary for legitimate cybersecurity work and enables new capabilities for advanced defensive workflows, including binary reverse engineering capabilities that enable security professionals to analyze compiled software for malware potential, vulnerabilities and security robustness without needing access to its source code.

Chances are that this model will quickly become obsolete, as OpenAI is rumored to be releasing GPT-5.5 imminently, but the approach is likely to persist, and they’ll probably have a GPT-5.5-Cyber too.

👁️👩🔬 The Next LASIK? Reshaping the Cornea With Electricity, Not Lasers

A follow-up from supporter Paul Matzko to the ray tracing LASIK piece in Edition #631:

In re LASIK, there are also cool alternatives being tested that avoid removing corneal tissue. Electromechanical reshaping instead electrochemically molds the eye into a better shape. The upshot is vision improvement but without the downsides of LASIK and the (small) risk of complications.

It’s still early, but the tech is pretty elegant. The name is a bit confusing: the technique is called electromechanical reshaping (EMR), but the trick is electrochemical.

Instead of using a laser to remove corneal tissue, the idea is to place a shaped mold on the eye, apply a small electric current, temporarily change the local pH, make the collagen-rich corneal tissue more moldable, and then let it “set” into a new shape when the current stops and the pH normalizes. The point is to change corneal curvature without cutting, burning, or ablating tissue.

LASIK permanently reduces corneal strength. EMR, if it works, wouldn't.

The backstory is worth telling:

“My postdoctoral fellow connected a pair of electrodes and a Coke can to a power supply…and out of spite, fried a piece of cartilage,” Wong recalls. The cartilage began to bubble, which the postdoc thought was from heat. “But it wasn’t hot. We touched it and thought, this is getting a shape change. This must be electrolysis,” he says.

That surprise pointed to electrochemistry rather than heat as the mechanism.

To be clear, it’s very early and the evidence is still preclinical, but the proof-of-concept is promising.

I suspect that a lot of people who are scared of the surgery would go for something that doesn’t involve a blade or a laser, if it proves out. There could also be cost advantages: in theory, electrodes and a mold are probably significantly cheaper than medical femtosecond lasers, which could matter a lot in poorer parts of the world.

Something to keep an eye on (I know, I know 😬).

🎨 🎭 Liberty Studio 👩🎨 🎥

🇮🇹 Better Late Than Never: The Talented Mr. Ripley 🛶

🟩 NO SPOILERS BELOW 🟩

I’ve been plugging holes in my culture lately. I had never seen Casablanca, so I watched it this week (great film! It moves, it’s funny, Ingrid Bergman is literally luminous on screen, and Bogart has that old-school cool).

Another one I had never seen: The Talented Mr. Ripley.

It’s a 1999 film based on a 1955 novel. It’s set in Italy, and the location is an important character in the film. The cast is absurdly stacked with actors at the beginning of their historic runs: Matt Damon, Jude Law, Cate Blanchett, Gwyneth Paltrow, Philip Seymour Hoffman (only a few scenes, but he kills it).

I knew this was an unusual role for Damon, and I won’t say more so as not to spoil it if you haven’t seen it, but it truly is different territory for him. He does a great job, even if sometimes it’s hard to tell whether some of the weirdness is just how the character is written or Damon not quite hitting it perfectly.

Jude Law is freaking magnetic. A tornado of charisma. Hard to pull off unless you really have it. And the women, Blanchett and Paltrow, hold it all together. Paltrow’s last scene of the film lands hard (and apparently isn’t in the book).

I prefer the first half of the film to the second, when it almost becomes a different film, but I’m glad I saw it. It’s a strong B+.